AI in healthcare is reshaping how decisions are made, but it’s a balancing act between reducing costs and maintaining ethical standards. Hospitals are adopting AI-powered systems, often built on real-time biomarker monitoring, to improve diagnostics and treatment plans. These systems can cut expenses, save time, and expand access to care, but they also raise concerns about equity, transparency, and bias.

Key takeaways:

- Cost-focused AI: Saved over $2.3 million per hospital over a decade in one simulation and improves efficiency, but risks reinforcing disparities.

- Ethics-focused AI: Ensures fairness and trust by addressing biases but requires higher initial investment and ongoing oversight.

- Challenges: Real-world performance gaps, biases in datasets, and data integration hurdles.

To succeed, healthcare systems must balance financial efficiency with ethical responsibility, ensuring AI benefits all patients equally.

1. AI Health Systems That Focus on Cost Reduction

Economic Impact

AI designed to cut costs is proving its worth by automating tasks and streamlining resources. Take, for example, a U.S. hospital simulation conducted in January 2025. Researchers modeled 20 hospitals treating 20 patients daily over a decade. The findings? AI-driven treatment systems saved each hospital over $2.3 million by improving clinical efficiency and reducing diagnostic labor. By Year 10, these systems saved the equivalent of 15.2 hours of human labor per day, compared to the initial 3.3 hours daily[4].

The savings are even more striking in specific medical fields. AI-assisted colonoscopies could save $149.2 million annually in Japan and $85.2 million in the U.S.[3]. In Singapore, AI used in the national diabetic retinopathy screening program (2024/2025) cut per-patient costs by up to 19.5%, achieving an Incremental Cost-Effectiveness Ratio (ICER) as low as $1,107.63 per Quality-Adjusted Life Year (QALY)[3]. For medication management, the return on investment has reached an impressive 12.4:1[3].
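The two metrics quoted above reduce to simple arithmetic. The sketch below shows the standard ICER definition (extra cost divided by extra QALYs) and treats the ROI figure as a benefit-to-cost ratio, which is an assumption about how the cited 12.4:1 was calculated. All input numbers are hypothetical and chosen only to make the arithmetic easy to follow; they are not taken from the cited studies.

```python
def icer(cost_new: float, cost_old: float, qaly_new: float, qaly_old: float) -> float:
    """Incremental cost per QALY gained: (C_new - C_old) / (QALY_new - QALY_old)."""
    delta_cost = cost_new - cost_old
    delta_qaly = qaly_new - qaly_old
    if delta_qaly == 0:
        raise ValueError("QALY difference is zero; ICER is undefined")
    return delta_cost / delta_qaly


def benefit_cost_ratio(total_benefit: float, total_cost: float) -> float:
    """Benefit per unit of cost, e.g. 12.4 reads as '12.4:1' (assumed ROI convention)."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return total_benefit / total_cost


# Hypothetical per-patient figures, for illustration only.
example_icer = icer(cost_new=1_500.0, cost_old=1_000.0, qaly_new=10.5, qaly_old=10.0)
example_ratio = benefit_cost_ratio(total_benefit=124_000.0, total_cost=10_000.0)

print(f"ICER: ${example_icer:,.2f} per QALY gained")
print(f"Benefit-to-cost ratio: {example_ratio:.1f}:1")
```

A lower ICER means each additional QALY costs less. A negative ICER needs care when reading it, because it can mean either "cheaper and better" or "costlier and worse".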

Equity and Accessibility

The benefits of cost-saving AI go beyond financials, offering solutions to critical access challenges. In the U.S., about 77 million people - roughly 24% of the population - live in areas lacking adequate primary care services[8]. By automating routine screenings and enabling remote monitoring and post-surgery care, AI systems can extend specialized care to these underserved communities.

However, focusing solely on cost can backfire. A 2019 study by Obermeyer et al. found that a widely used hospital algorithm underestimated the health risks of Black patients compared to White patients with identical conditions. The algorithm used "annual cost of care" as a proxy for health complexity. Because systemic factors lead to lower healthcare spending on Black patients, the algorithm scored them as healthier than they were, so they had to be significantly sicker to receive the same care[5]. This example shows how cost-focused AI can unintentionally reinforce inequalities, and why long-term clinical outcomes should be evaluated alongside cost metrics.
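The proxy problem described above can be reproduced with a toy example. This is a minimal sketch on synthetic data, not the algorithm from the study: the `Patient` fields, the group labels, and the assumption that group B spends half as much as group A at the same level of illness are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Patient:
    patient_id: str
    group: str               # hypothetical demographic label
    chronic_conditions: int  # stand-in for true health need
    annual_cost: float       # spending, used here as the proxy label


# Synthetic cohort: same illness burden in both groups,
# but group B spends half as much at every level of need.
PATIENTS = [
    Patient("a1", "A", 5, 10_000.0),
    Patient("a2", "A", 3, 6_000.0),
    Patient("a3", "A", 1, 2_000.0),
    Patient("b1", "B", 5, 5_000.0),
    Patient("b2", "B", 3, 3_000.0),
    Patient("b3", "B", 1, 1_000.0),
]


def top_k(patients, key, k):
    """Return the k patients ranked highest by the given scoring function."""
    return sorted(patients, key=key, reverse=True)[:k]


# Two slots in a care-management program, filled two different ways.
by_cost = top_k(PATIENTS, key=lambda p: p.annual_cost, k=2)
by_need = top_k(PATIENTS, key=lambda p: p.chronic_conditions, k=2)

print("Ranked by cost:", [(p.patient_id, p.group) for p in by_cost])
print("Ranked by need:", [(p.patient_id, p.group) for p in by_need])
```

Ranking by cost fills both slots from group A, even though patient `b1` is as sick as `a1`. Ranking by the need measure selects one patient from each group.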

Long-Term Outcomes

AI systems aimed at cutting costs can also improve long-term health by enabling earlier interventions, particularly in fields like oncology and cardiology. This can improve Quality-Adjusted Life Years and lower the financial strain of managing chronic diseases[3]. However, static models often overestimate these benefits. Because model performance can decay in real-world settings, it remains uncertain whether the anticipated savings will fully materialize in everyday healthcare practice.

Integration and Interoperability

For these financial and clinical benefits to materialize, smooth integration is critical. Keeping implementation costs low is equally important. For instance, AI for colonoscopy must keep unit costs near $19 per procedure to remain viable. Similarly, AI-guided lung cancer screenings and mammograms must stay under $1,240 per scan and $318 per mammogram, respectively[3]. Even minor setbacks - whether technical, operational, or related to staff training - can quickly eat into these savings. Additionally, the digital divide poses another challenge, as communities without high-speed internet access are often unable to benefit from AI-enabled telehealth and remote monitoring services[8].

One potential solution to these integration challenges is adopting unified protocols like BondMCP - Health Model Context Protocol (https://bondmcp.com). This approach can simplify interoperability, reduce technical barriers, and help ensure that the cost-saving potential of AI systems is fully realized.


2. AI Health Systems That Focus on Ethical Standards

Equity and Accessibility

Ethical AI systems prioritize fairness and transparency, addressing gaps that can lead to unequal care. For example, dermatological AI tools trained predominantly on lighter skin tones often fail to accurately diagnose conditions on darker skin tones, highlighting a major disparity in care [2][5]. A striking study using the HEAL framework revealed that AI systems for non-cancer dermatologic conditions in older adults had a 0% performance rate, underscoring the importance of embedding ethical AI in clinical decisions from the start [4].

To tackle these issues, diverse datasets from underrepresented populations must be included during development [5]. While the U.S. FDA has approved over 1,200 AI and machine learning-enabled medical devices as of July 2025 [5], approval alone doesn’t ensure fairness. Continuous test-time auditing is critical to flag and address biases that might make predictions unreliable for specific groups [2].
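One simple form of the auditing described above is to compute a metric separately for each subgroup and flag large gaps. The sketch below does this for sensitivity (true-positive rate). The record layout, the group names, and the 0.10 gap threshold are assumptions for illustration, and the records are synthetic.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, y_true, y_pred) with 0/1 labels.

    Returns {group: sensitivity}; groups with no positive cases are omitted.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            if y_pred == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


def flag_gaps(per_group, max_gap=0.10):
    """Return groups whose sensitivity trails the best group by more than max_gap."""
    if not per_group:
        return []
    best = max(per_group.values())
    return sorted(g for g, s in per_group.items() if best - s > max_gap)


# Synthetic audit log: (group, true label, model prediction).
RECORDS = [
    ("lighter_skin", 1, 1), ("lighter_skin", 1, 1),
    ("lighter_skin", 1, 1), ("lighter_skin", 1, 1),
    ("lighter_skin", 0, 0),
    ("darker_skin", 1, 1), ("darker_skin", 1, 0),
    ("darker_skin", 1, 1), ("darker_skin", 1, 0),
    ("darker_skin", 0, 0),
]

per_group = sensitivity_by_group(RECORDS)
flagged = flag_gaps(per_group)
print(per_group, flagged)
```

In practice the same loop would cover more than one metric (specificity, calibration), and subgroups with few positive cases would need confidence intervals before any conclusion is drawn.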

Long-Term Outcomes

Ethical AI systems not only promote fairness but also support sustainable, value-based care models. These systems often require higher upfront investment but can yield significant returns - up to 10× higher reimbursements compared to traditional Fee-for-Service models, provided they meet key population health benchmarks [4]. With around 75% of large healthcare organizations planning to adopt more AI tools by 2025 [10], trust in these systems remains a vital concern. One major challenge is "dataset shift", where models lose accuracy as clinical practices or patient demographics evolve. To maintain long-term safety, ongoing monitoring and adaptive learning are essential [2].
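Dataset shift can be monitored by comparing the distribution of an input feature at training time with its distribution in current use. The sketch below uses the Population Stability Index (PSI), one common drift statistic; the source does not say which monitoring method the cited work uses, so this is a swapped-in example. The age-band counts are hypothetical.

```python
import math


def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i), with a_i, e_i as bin shares."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both histograms must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    if expected_total <= 0 or actual_total <= 0:
        raise ValueError("histograms must contain at least one observation")
    score = 0.0
    for e_count, a_count in zip(expected_counts, actual_counts):
        # Clamp empty bins to a small share so the logarithm stays defined.
        e_share = max(e_count / expected_total, eps)
        a_share = max(a_count / actual_total, eps)
        score += (a_share - e_share) * math.log(a_share / e_share)
    return score


# Hypothetical patient counts per age band: training data vs. current clinic population.
TRAINING_AGE_BANDS = [25, 25, 25, 25]
CURRENT_AGE_BANDS = [10, 20, 30, 40]

no_drift = population_stability_index(TRAINING_AGE_BANDS, TRAINING_AGE_BANDS)
drift = population_stability_index(TRAINING_AGE_BANDS, CURRENT_AGE_BANDS)
print(f"PSI unchanged population: {no_drift:.3f}")
print(f"PSI shifted population:   {drift:.3f}")
```

A frequently quoted rule of thumb reads PSI below 0.1 as stable and above 0.25 as a major shift. Those cut-offs are conventions rather than clinical standards, so any alert threshold should be validated locally.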

"The implementation of AI in healthcare requires not just technical competence but also 'value flexibility' - the ability to navigate between algorithmic recommendations and human values in clinical decision-making." - McDougall [1]

Integration and Interoperability

One of the biggest hurdles for ethical AI systems is balancing the "black box" nature of deep learning models with the transparency clinicians need to trust them [5][6]. Explainability is vital - not only to build trust but also to ease the cognitive load on physicians who must decide whether to rely on or override algorithmic recommendations. This added pressure can contribute to burnout [9].

Effective integration calls for human-in-the-loop protocols, especially in high-stakes fields like oncology or surgery, to ensure accountability remains with medical professionals [5]. Tools like BondMCP - Health Model Context Protocol (https://bondmcp.com) provide a framework for bridging ethical principles with practical interoperability by using shared context layers and health-specific ontologies. This approach relies on contextual data fusion to ensure that diverse data streams are integrated without losing clinical nuance. Transparent AI systems, paired with continuous oversight, are essential for maintaining both ethical and clinical standards.
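The human-in-the-loop idea above can be expressed as a routing rule that decides when a recommendation must go to a clinician before anything else happens. This is a minimal sketch under stated assumptions: the `Route` names, the 0.90 confidence threshold, and the set of high-stakes specialties are hypothetical and do not come from BondMCP or any cited framework.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_SUGGEST = "auto_suggest"          # shown as a suggestion; clinician can override
    CLINICIAN_REVIEW = "clinician_review"  # blocked until a clinician signs off


# Assumed list of specialties where review is always mandatory.
HIGH_STAKES_SPECIALTIES = frozenset({"oncology", "surgery"})


@dataclass(frozen=True)
class Recommendation:
    specialty: str
    confidence: float  # model-reported probability in [0, 1]


def route(rec: Recommendation, min_confidence: float = 0.90) -> Route:
    """Send high-stakes or low-confidence recommendations to mandatory review."""
    if not 0.0 <= rec.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if rec.specialty in HIGH_STAKES_SPECIALTIES or rec.confidence < min_confidence:
        return Route.CLINICIAN_REVIEW
    return Route.AUTO_SUGGEST


print(route(Recommendation("oncology", 0.99)))
print(route(Recommendation("dermatology", 0.70)))
print(route(Recommendation("dermatology", 0.95)))
```

Even the `AUTO_SUGGEST` path leaves the final decision with the clinician. The rule only determines whether sign-off is required before the recommendation is surfaced.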

The way forward requires a multidisciplinary approach, involving clinicians, legal experts, ethicists, and patient advocates to oversee AI performance and its alignment with ethical principles throughout its deployment [5].


Pros and Cons

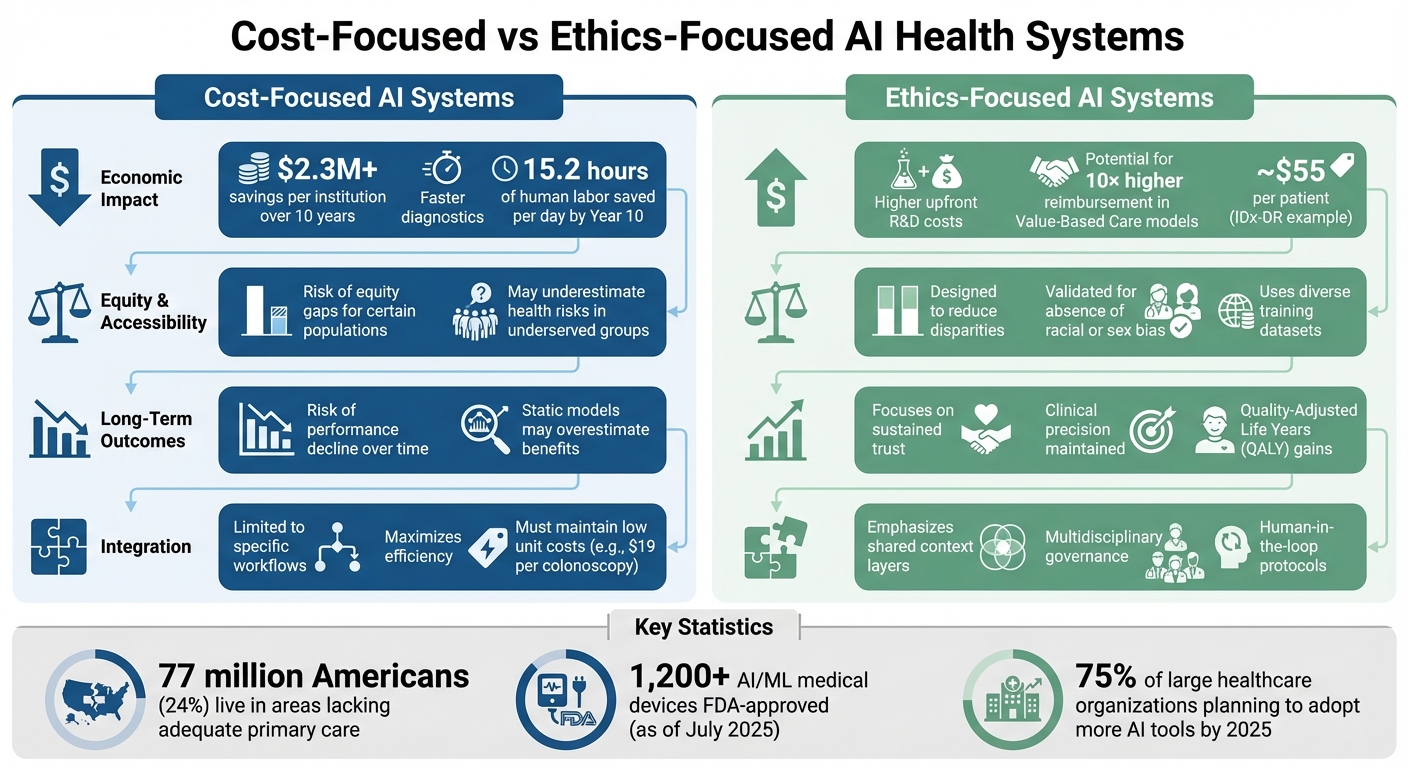

Cost-Focused vs Ethics-Focused AI Health Systems Comparison

This section weighs the key trade-offs between cost-driven and ethics-centered AI health systems, each of which brings distinct challenges and benefits for healthcare organizations.

Cost-focused systems are known for their financial advantages. For instance, AI applications in treatment are projected to save over $2.3 million per institution across a 10-year span [4]. These systems excel at reducing diagnostic times and cutting labor costs. However, many economic models assessing these systems rely on "static" assumptions, often ignoring real-world expenses like staff training, IT integration, and ongoing maintenance. This can lead to an overestimation of their financial benefits [4][3]. Additionally, studies suggest that cost-driven models might unintentionally marginalize certain populations, raising concerns about equity. While these systems deliver clear economic gains, they may compromise fairness and diagnostic accuracy.

On the other hand, ethics-focused systems prioritize fairness and trust, even if it means higher initial costs. These systems aim to address health disparities by using diverse training datasets and conducting rigorous bias testing. Although they require more upfront investment, they can generate up to 10× higher reimbursements through Value-Based Care (VBC) models that align with population health goals [4][7]. A prime example of this approach is Digital Diagnostics' IDx-DR system, which received FDA authorization in April 2018. The system demonstrated no racial, ethnic, or sex-based bias in its diagnostic sensitivity during testing [11][7]. By charging approximately $55 per patient, it ensures accessibility in primary care settings while maintaining its ethical standards. However, these systems also require continuous multidisciplinary oversight to uphold their integrity.

The table below outlines the main trade-offs:

| Criterion | Cost-Focused AI Systems | Ethics-Focused AI Systems |
|---|---|---|
| Economic Impact | $2.3M+ savings per institution over 10 years; faster diagnostics [4] | Higher upfront R&D costs; potential for 10× higher reimbursement in VBC models [4][7] |
| Equity and Accessibility | Risk of equity gaps for certain populations [4] | Designed to reduce disparities; validated for absence of racial or sex bias [7] |
| Long-Term Outcomes | Risk of performance decline over time [4] | Focuses on sustained trust, clinical precision, and QALY gains [3] |
| Integration and Interoperability | Limited to specific workflows; maximizes efficiency [4] | Emphasizes shared context layers and multidisciplinary governance [4] |

Solutions like BondMCP - Health Model Context Protocol illustrate a way to bridge these gaps by integrating shared context layers and health-specific ontologies. This approach enables systems to combine cost-effectiveness with ethical interoperability, offering a more balanced framework for AI in healthcare.

Conclusion

AI health systems that focus on cost and those that emphasize ethics each bring distinct advantages to the table. Cost-driven models can lead to notable financial savings, but they risk creating disparities and may falter in real-world applications. On the other hand, ethics-centered systems aim to build trust and promote fairness, though they often require more significant initial investments and ongoing oversight to ensure they uphold their standards.

The key to building resilient AI health systems lies in balancing these priorities. The IA2TF Framework provides a structured approach with five essential pillars: co-design, data standardization, real-world performance monitoring, ethical and regulatory integration, and multidisciplinary governance [4]. A case in point is Michael D. Abràmoff's IDx-DR system, which integrates these principles. It charges approximately $55 per patient, with CMS reimbursement rates ranging from $45 to $64 per exam [11][7].

To stay ahead, healthcare organizations should adopt flexible models that adapt to AI's evolving performance rather than relying on static assumptions [3]. Aligning AI systems with treatment optimization and Value-Based Care models can deliver significant returns, especially when these efforts are tied to improving population health outcomes [4][7].

One example of bridging the gap between cost and ethics is the BondMCP - Health Model Context Protocol. This framework uses shared context layers and health-specific ontologies to connect fragmented health data systems. By enabling interoperability, it allows AI agents to collaborate effectively, marrying financial efficiency with ethical responsibility. This transforms AI from isolated tools into cohesive systems that support both institutional goals and patient care.

The future of AI in healthcare hinges on multidisciplinary governance and ongoing validation in real-world settings [11][4]. When cost-efficiency and ethical considerations are treated as complementary forces, healthcare systems can create scalable AI solutions that uphold both financial sustainability and patient welfare.

FAQs

How can AI in healthcare balance reducing costs with maintaining ethical standards?

AI is reshaping healthcare by automating tasks, refining diagnostics, and streamlining resource use - all of which can help reduce costs for hospitals, insurers, and patients. While these efficiencies are promising, it's vital to ensure that cost-cutting measures don't compromise core ethical values like patient autonomy, privacy, fairness, and transparency. If not carefully managed, AI systems could unintentionally magnify biases, obscure decision processes, and weaken trust in healthcare systems.

To address these challenges, experts emphasize the importance of weaving ethical practices into every phase of AI development. This includes implementing bias audits, ensuring transparent reporting, and maintaining strong data stewardship. Another key strategy is creating reimbursement models that align financial incentives with both clinical outcomes and ethical standards. This dual focus helps ensure AI solutions not only reduce expenses but also maintain the fairness, safety, and accountability that are critical for trustworthy healthcare.

What are the risks of bias in AI healthcare systems, and how can we address them?

AI healthcare systems can unintentionally deepen existing inequalities when built on biased data or flawed assumptions. These biases can stem from several areas, including training data that fails to represent certain racial, ethnic, gender, or socioeconomic groups, algorithm designs that cater to majority populations, or deployment settings that differ significantly from the environments where the AI was originally developed - resulting in performance disparities.

Addressing these challenges requires a proactive approach. Some effective strategies include:

- Incorporating diverse, high-quality training datasets that accurately represent the U.S. population.

- Performing fairness audits and analyzing how the model performs across various subgroups.

- Offering transparent documentation, such as model cards, to clearly outline limitations.

- Continuously monitoring AI performance post-deployment to identify and correct biases.

- Engaging a wide range of stakeholders - patients, clinicians, and ethicists - to guide decisions and ensure inclusivity.

One promising approach comes from BondMCP’s Health Model Context Protocol. This protocol integrates data from wearables, lab tests, and clinical records across a wide range of demographics. By creating a more complete and representative knowledge base, it helps AI systems make fairer, more ethical, and cost-efficient health decisions.

Why is interoperability crucial for advancing AI in healthcare?

Interoperability plays a crucial role in applying AI to healthcare. It ensures that data from different sources - such as medical records, wearable devices, lab results, and imaging - can connect and function as a unified system. When these systems communicate effectively, AI gains access to richer, more diverse datasets, enabling it to deliver precise predictions and tailored care solutions.

On the flip side, a lack of interoperability traps data in isolated silos, limiting the potential of AI models, slowing progress, and driving up costs. By bringing together fragmented information into a cohesive flow, platforms like BondMCP allow AI to offer more precise and personalized guidance. This integration not only boosts efficiency but also tackles economic and ethical challenges in the healthcare landscape.