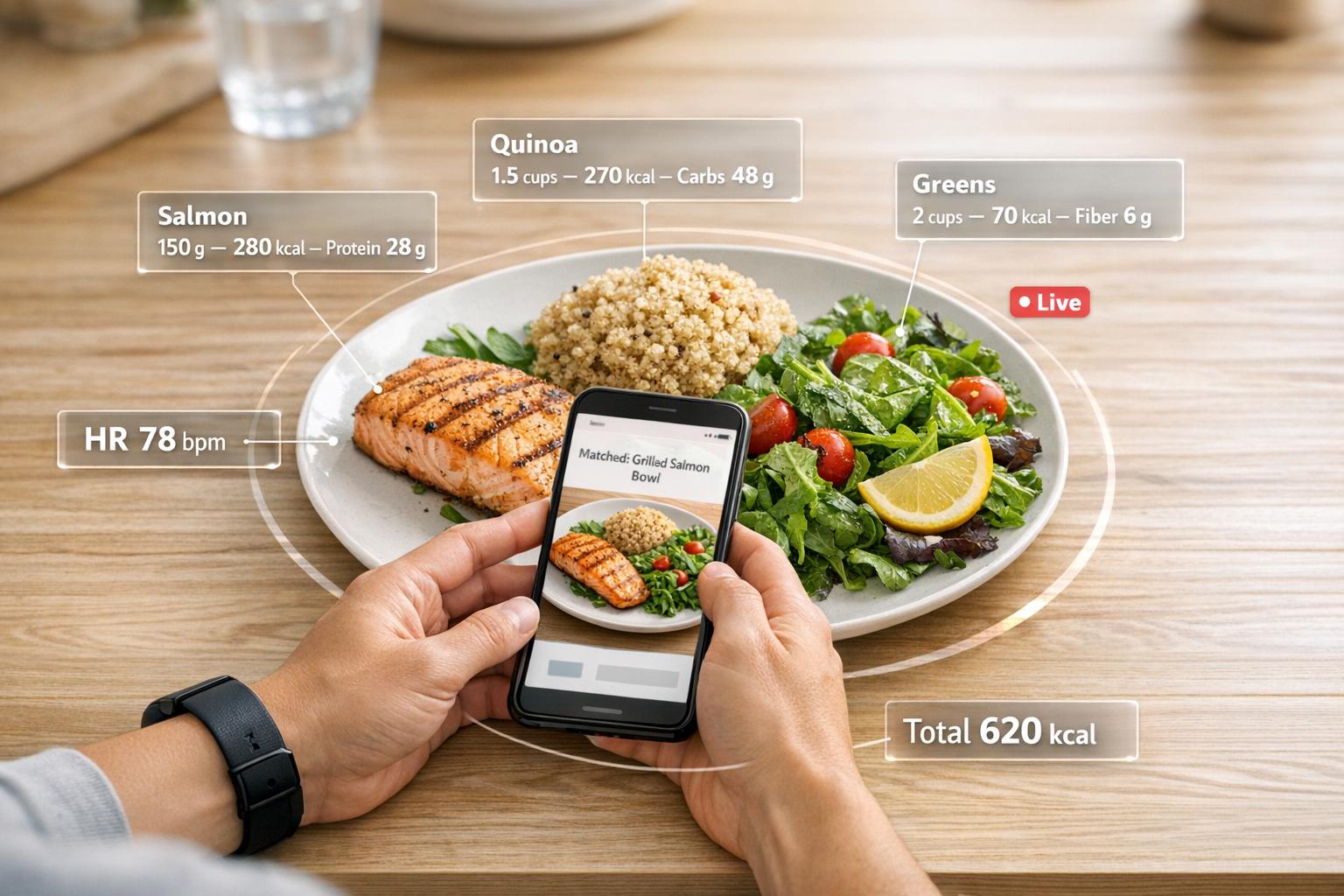

AI is transforming how we monitor food and nutrition. Instead of manually logging meals, systems now use photos, voice inputs, and wearables to analyze what you eat in seconds. Tools like PlateLens can identify foods, estimate portions, and calculate calories with a reported error of just ±1.2%. That level of accuracy helps users stay on track with their fitness goals and avoid common tracking errors. Real-time feedback also builds awareness, which can improve eating habits and long-term health outcomes.

Key advancements include:

- Photo-based tracking: Snap a meal photo, and AI estimates calories and nutrients quickly.

- Wearable devices: Track eating behaviors like chewing and portion sizes using sensors.

- Food databases: Match foods to trusted sources like USDA for detailed nutrient profiles.

- Real-time insights: AI coaches provide instant feedback and personalized advice.

Despite challenges like hidden ingredients and portion estimation, these systems outperform manual methods, making nutrition tracking faster, easier, and more reliable.


AI-Powered Image-Based Food Recognition

How AI Food Recognition Works: From Photo to Nutritional Data

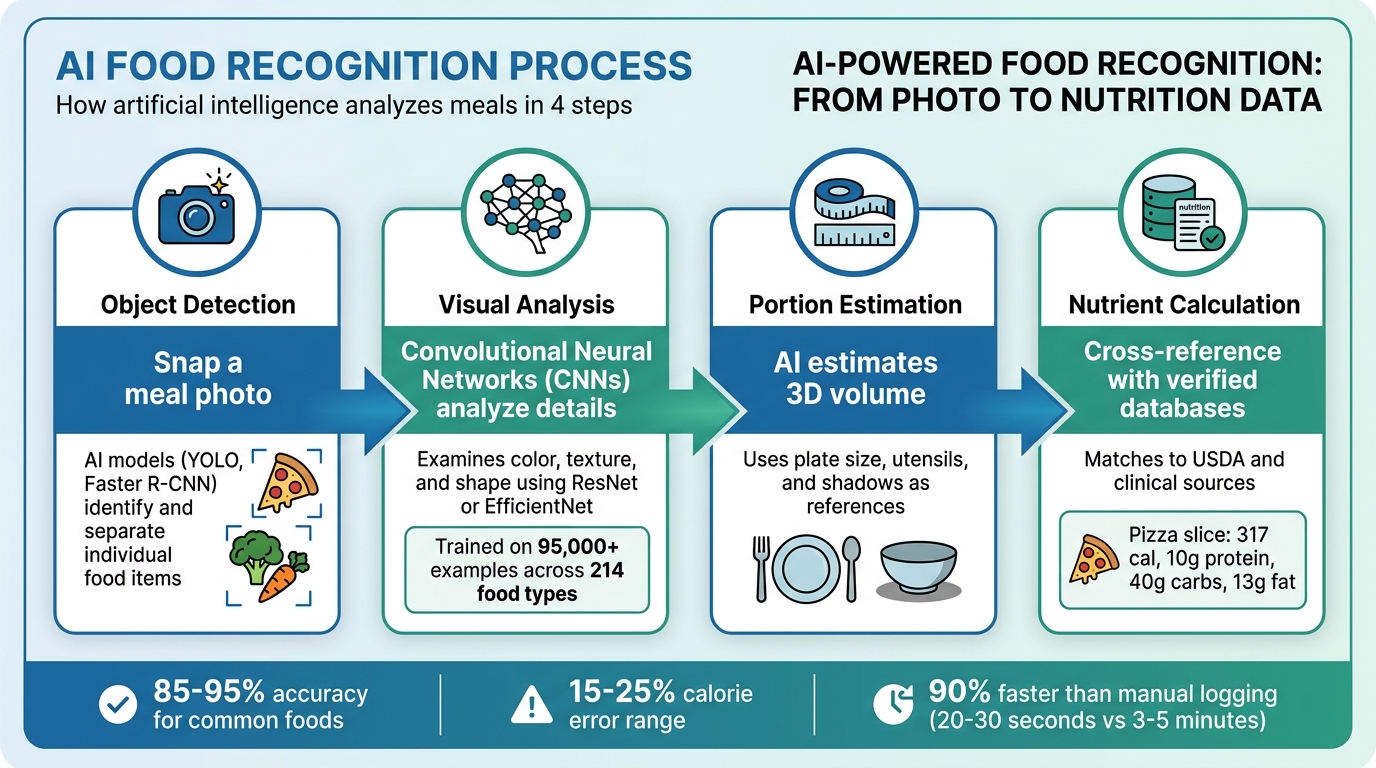

How Image Recognition Works

When you snap a photo of your meal, AI kicks into gear with a series of steps. It starts with object detection, using models like YOLO (You Only Look Once) or Faster R-CNN to pinpoint and separate individual food items [4][6].

Then, Convolutional Neural Networks (CNNs) step in to examine each item's visual details - think color, texture, and shape. These networks, often built on architectures like ResNet or EfficientNet, classify your food image into one of thousands of food categories learned from massive training datasets [4][6]. For example, researchers at NYU Tandon School of Engineering developed a system in March 2025 that was trained on 95,000 examples across 214 food types. When tested, it calculated the nutritional content of a pizza slice - 317 calories, 10g protein, 40g carbs, and 13g fat - closely matching standard references. It also successfully analyzed more complex dishes like idli sambhar (221 calories) and baklava (310 calories) [7].

A standout feature is portion estimation. AI uses references, like the size of a plate or utensils, and even analyzes shadows to estimate the 3D volume of food items. Once the portion size is determined, the data is cross-referenced with verified clinical sources for precise nutritional information [4][6][7].
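To make the reference-object idea concrete, here is a minimal sketch of how a plate of known size can turn pixel measurements into a rough weight. It assumes a top-down photo, a known plate diameter, and a simple slab model (area × height × density); the function names and all numbers are hypothetical and not taken from any specific app.

```python
def pixel_area_to_cm2(food_area_px: float, plate_diameter_px: float,
                      plate_diameter_cm: float) -> float:
    """Scale a food region's pixel area to cm^2, using the plate as the ruler."""
    cm_per_px = plate_diameter_cm / plate_diameter_px
    return food_area_px * cm_per_px ** 2


def estimate_grams(area_cm2: float, height_cm: float,
                   density_g_per_cm3: float) -> float:
    """Crude mass estimate: treat the food as a flat slab (area x height x density)."""
    return area_cm2 * height_cm * density_g_per_cm3


# Hypothetical measurements from a top-down photo of a 27 cm plate.
area = pixel_area_to_cm2(food_area_px=40_000, plate_diameter_px=800,
                         plate_diameter_cm=27.0)
grams = estimate_grams(area, height_cm=2.0, density_g_per_cm3=0.9)
print(f"{area:.1f} cm^2, about {grams:.0f} g")
```

Real systems estimate height and density from learned models or depth data rather than fixed guesses, which is why portion size remains the largest source of error.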

"Traditional methods of tracking food intake rely heavily on self-reporting, which is notoriously unreliable. Our system removes human error from the equation." - Prabodh Panindre, Associate Research Professor, NYU Tandon School of Engineering [7]

These advanced techniques work seamlessly with wearable devices, making meal tracking faster and more intuitive than ever.

Benefits of Photo-Based Tracking

Photo-based tracking, powered by these advanced recognition systems, is a game-changer for meal logging. It cuts the time spent logging meals by roughly 90%, reducing it from 3 to 5 minutes to just 20 to 30 seconds [6]. AI food recognition models also identify common foods correctly 85% to 95% of the time, with calorie estimates typically within 15% to 25% of the true value. Compare that to manual self-reporting, where people often underestimate their intake by 20% to 50% [4][6].
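The figures above are easier to compare once they are turned into concrete numbers. The short sketch below checks the time saving and shows what the two error ranges mean in calories; the 600-calorie meal is an assumed example, and the helper names are hypothetical.

```python
from typing import Tuple


def percent_reduction(before: float, after: float) -> float:
    """Percentage by which `after` is smaller than `before`."""
    return (before - after) * 100 / before


def error_band_kcal(meal_kcal: float, low_pct: float,
                    high_pct: float) -> Tuple[float, float]:
    """Absolute calorie range implied by a percentage error range."""
    return meal_kcal * low_pct / 100, meal_kcal * high_pct / 100


# Logging time in seconds: 3 to 5 minutes by hand vs. 20 to 30 seconds by photo.
reduction_short = percent_reduction(before=180, after=20)
reduction_long = percent_reduction(before=300, after=30)

# Assumed 600 kcal meal: AI error range vs. self-report underestimation range.
ai_band = error_band_kcal(600, 15, 25)
self_report_band = error_band_kcal(600, 20, 50)

print(f"time saved: {reduction_short:.0f}% to {reduction_long:.0f}%")
print(f"AI error: {ai_band[0]:.0f} to {ai_band[1]:.0f} kcal")
print(f"self-report gap: {self_report_band[0]:.0f} to {self_report_band[1]:.0f} kcal")
```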

This combination of speed and accuracy makes sticking with tracking much easier over time. To get the best results, take photos from a top-down or 45° angle in natural light, ensuring that each food item is clearly separated [4][6]. However, keep in mind that AI can't identify "hidden" calories, such as those from cooking oils, butter, or dressings - you'll need to add those manually after the analysis [4][5].

Wearable Devices for Monitoring Eating Behavior

Wearable technology has taken AI-based dietary tracking to the next level by focusing on the physical process of eating itself.

Chew and Swallow Detection

These devices now monitor not just what you eat but how you eat. For example, wrist devices like iEat use bio-impedance sensors to measure conductivity changes during hand-to-mouth actions, identifying activities such as cutting food or drinking water [8].

Smartwatches and smart glasses, equipped with inertial measurement units (IMUs), track hand-to-mouth movements through accelerometers and gyroscopes. Smart glasses go a step further, detecting chewing vibrations and head movements [10]. Another innovative device, the necklace-style NeckSense, uses proximity and ambient light sensors to measure changes in chin-to-chest distance while chewing [11].

These systems have shown impressive accuracy. For instance, the CuisineSense system can distinguish eating from non-eating behaviors with an accuracy of 97.94% [10]. Similarly, bio-impedance wearables achieve an F1 score of 86.4% when identifying four different eating activities [8]. AI models have also been able to estimate bite weight with a mean absolute error as low as 3.99 grams [9]. Moreover, some devices, like dietary monitoring necklaces, offer up to 15.8 hours of continuous battery life, ensuring they can track eating behaviors throughout the day [11].
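For readers unfamiliar with the metrics quoted here, the sketch below shows how an F1 score and a mean absolute error are computed. The counts and bite weights are made-up illustration values, not data from the cited studies.

```python
from typing import Sequence


def f1_score(true_pos: int, false_pos: int, false_neg: int) -> float:
    """Harmonic mean of precision and recall for one class."""
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    return 2 * precision * recall / (precision + recall)


def mean_absolute_error(actual: Sequence[float],
                        predicted: Sequence[float]) -> float:
    """Average size of the prediction error, ignoring its direction."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


# Made-up example: 80 eating events detected correctly, 10 false alarms, 20 missed.
f1 = f1_score(true_pos=80, false_pos=10, false_neg=20)

# Made-up bite weights in grams: measured vs. predicted.
mae = mean_absolute_error([10.0, 12.0, 8.0], [12.0, 9.0, 8.0])

print(f"F1 = {f1:.3f}, MAE = {mae:.2f} g")
```

F1 balances false alarms against missed events, which matters for eating detection because non-eating motion vastly outnumbers eating motion over a day.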

Integration with AI for Real-Time Insights

Wearables rely on a two-stage AI system for real-time analysis. The first stage, "Eating State Detection", filters out non-eating motions, while the second stage, "Food Type Recognition", classifies eating behaviors. This process is remarkably fast, analyzing data in just 2.16 milliseconds per 10-second interval [10].
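The two-stage structure can be sketched as a simple gate followed by a classifier. In the example below, both stages are toy threshold rules standing in for trained models; the function names, thresholds, and labels are hypothetical and only illustrate the control flow.

```python
from typing import Callable, Optional, Sequence

Window = Sequence[float]  # one 10-second window of sensor samples


def two_stage_classify(window: Window,
                       is_eating: Callable[[Window], bool],
                       classify_food: Callable[[Window], str]) -> Optional[str]:
    """Stage 1 filters out non-eating motion; stage 2 runs only on eating windows."""
    if not is_eating(window):
        return None
    return classify_food(window)


def toy_is_eating(window: Window) -> bool:
    """Stand-in for 'Eating State Detection': high signal variance means eating."""
    mean = sum(window) / len(window)
    variance = sum((x - mean) ** 2 for x in window) / len(window)
    return variance > 0.5


def toy_classify_food(window: Window) -> str:
    """Stand-in for 'Food Type Recognition': peak amplitude picks a label."""
    return "crunchy" if max(window) > 2.0 else "soft"


for samples in ([0.0, 0.1, 0.0, 0.1], [0.0, 3.0, 0.0, 3.0], [0.0, 2.0, 0.0, 2.0]):
    print(two_stage_classify(samples, toy_is_eating, toy_classify_food))
```

Running the cheap first stage on every window and the second stage only on eating windows is one way such systems keep per-window processing time low.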

The AI doesn’t stop there - it identifies patterns in eating habits, offering immediate feedback on metrics such as meal duration, eating speed, and portion size. For example, the iEat system can count the number of food items consumed with an average error rate of only 11.48% [8]. When multiple devices, like smartwatches and smart glasses, are used together, the combined data provides a detailed view of hand movements and jaw activity, improving reliability [10].

These insights are often linked to platforms like Healify, which consolidate the data into actionable feedback. By integrating wearable data with AI health coaching systems, users gain precise, real-time nutritional guidance during meals, completing the loop of advanced dietary monitoring.

Nutrient Analysis Using Food Databases

Once AI systems identify foods through image recognition and monitor eating behaviors, they tap into extensive food databases to convert visual information into detailed nutrient profiles. This step bridges the gap between recognizing a dish and understanding its nutritional content.

How Nutrient Estimation Works

The process starts by matching identified foods - like a grilled chicken breast or a side of broccoli - to entries in trusted databases such as USDA FoodData Central or the NCC Food and Nutrient Database. These databases provide standardized data on macronutrients and micronutrients for the recognized items [12][13].
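As a rough illustration of the matching step, the sketch below looks up a recognized food in a small in-memory table of per-100 g values and scales it to the estimated portion. The table is a hypothetical stand-in for a real database query, and its numbers are placeholders rather than official USDA or NCC figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nutrients:
    kcal: float
    protein_g: float
    carbs_g: float
    fat_g: float

    def scaled(self, factor: float) -> "Nutrients":
        """Return a copy with every value multiplied by `factor`."""
        return Nutrients(self.kcal * factor, self.protein_g * factor,
                         self.carbs_g * factor, self.fat_g * factor)


# Placeholder per-100 g values for illustration only.
PER_100G = {
    "grilled chicken breast": Nutrients(165.0, 31.0, 0.0, 3.6),
    "broccoli": Nutrients(34.0, 2.8, 7.0, 0.4),
}


def nutrients_for(food_label: str, grams: float) -> Nutrients:
    """Match a recognized label to its table entry and scale to the portion."""
    entry = PER_100G[food_label.strip().lower()]
    return entry.scaled(grams / 100)


meal = nutrients_for("Grilled Chicken Breast", grams=150)
print(f"{meal.kcal:.1f} kcal, {meal.protein_g:.1f} g protein")
```

A production system would also need fuzzy matching, since a model's label rarely equals a database entry name exactly.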

To ensure accuracy, advanced AI platforms use convolutional neural networks for precise food matching and automated depth estimation algorithms to measure portion sizes. Portion estimation is often the trickiest part of dietary tracking, but these tools help minimize errors [3]. With this foundation, AI taps into vast nutritional databases for reliable insights.

Large databases supply the infrastructure for this analysis. Passio's nutrition intelligence system manages 3.5 million structured food entries and processes over 100 million API requests annually, while the NCC Food and Nutrient Database covers approximately 19,500 foods with data on up to 178 nutrients [12][14]. These resources help keep the resulting insights accurate and practical, supporting the goal of personalized nutrition.

Accuracy and Limitations

While AI-driven nutrient tracking has made impressive strides, it’s not without challenges. One major issue is hidden ingredients. Even with highly accurate systems, factors like concealed components and regional recipe variations can complicate nutrient estimates. Stephen M. Walker II, Product Leader at Fuel Nutrition, highlights this challenge:

"Most users do not lose progress because a model confused salmon with chicken. They lose progress because the system logged a plausible draft that nobody checked" [15].

Regional dishes and homemade meals further complicate tracking. For instance, a beef pho may be overestimated by up to 49% due to noodles hidden under the broth, while bubble tea might be underestimated by as much as 76% because sugar and tapioca pearls are concealed within the drink [15]. Historically, many AI models have leaned toward Western meal patterns, but newer systems are expanding their datasets to include a broader range of global cuisines [15][4].

The quality of the database also plays a critical role. Sergey Oreshko, Co-founder and CEO of MyNetDiary, points out that databases with millions of unverified entries can create confusion by overwhelming users with duplicates, outdated information, or unchecked data [14]. Verified databases, which rely on lab-analyzed data, tend to be more reliable. In contrast, crowdsourced databases often introduce biases, such as underestimating protein intake by about 7.8% and carbohydrates by 6.4% [14].

Despite these hurdles, AI-powered tracking can far surpass manual methods. For example, PlateLens reports a Mean Absolute Percentage Error (MAPE) of 1.2%, which corresponds to about ±24 calories at a 2,000-calorie daily intake, a small margin relative to a 500-calorie daily deficit [3]. This level of precision represents a significant improvement over manual tracking and highlights how AI automates macro tracking and nutritional analysis. These advancements enable platforms like Healify to deliver real-time, tailored nutritional insights, completing the journey from food identification to actionable recommendations.
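MAPE is simply the average of each estimate's error expressed as a percentage of the true value. The sketch below computes it for a few made-up meals and then converts a 1.2% error into calories; the meal values and the 2,000-calorie day are assumed examples, not figures from the cited evaluation.

```python
from typing import Sequence


def mape(actual: Sequence[float], estimated: Sequence[float]) -> float:
    """Mean Absolute Percentage Error, in percent. Actual values must be non-zero."""
    ratios = [abs(a - e) / a for a, e in zip(actual, estimated)]
    return 100 * sum(ratios) / len(ratios)


def kcal_margin(daily_kcal: float, error_pct: float) -> float:
    """Calorie margin implied by a percentage error on a daily intake."""
    return daily_kcal * error_pct / 100


# Made-up meals: true calories vs. app estimates.
example_mape = mape(actual=[500, 400, 1000], estimated=[510, 392, 1000])

# A 1.2% error on an assumed 2,000 kcal day.
margin = kcal_margin(daily_kcal=2000, error_pct=1.2)

print(f"MAPE = {example_mape:.2f}%, margin = {margin:.0f} kcal")
```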

How Healify Integrates AI Nutritional Tracking

Healify takes the latest in image recognition and wearable technology and combines them into a seamless platform for personalized nutrition tracking. It integrates AI-powered food recognition, data from wearables, and tailored coaching to provide a complete picture of your health. Using its SNAP technology, Healify identifies meals through photos, estimating calories, protein, fats, carbs, and fiber with over 90% accuracy - even for a wide range of cuisines [17]. The Auto Snap feature simplifies the process further by automatically scanning your photo gallery to log meals [16].

The platform also syncs with Apple Health, blending nutrition data with real-time activity metrics from devices like Apple Watch, Fitbit, Garmin, and Samsung wearables. This ensures that nutritional recommendations align with your actual activity levels.

Features of Healify's Nutritional Tracking

Healify goes beyond basic data collection with an extensive food database and advanced analytics. It tracks a variety of foods, from classic American dishes to regional specialties [16]. Simultaneously, it analyzes several health metrics, including daily calorie intake, macronutrients (protein, fats, carbs), fiber, hydration levels, weight, BMI, and metabolic indicators through Continuous Glucose Monitor integration [18][19].

In 2024, Healify incorporated GPT-4 Vision into its Snap feature, increasing food tracking engagement by 50% and improving the reliability of meal logging. Voice logging adds another layer by capturing hidden ingredients like oils or dressings [17][18]. The system also evaluates meals and tailors recommendations based on your progress and activity.

Real-Time Insights via Healify's AI Coach

Healify’s AI health coach, Anna, provides 24/7 support by analyzing food logs and wearable data. Anna offers instant feedback, recipe ideas, grocery lists, and motivational advice [16]. You can set goals using natural language - such as “I want to lose 10 pounds in 2 months” - and Anna will create a realistic plan and adjust her suggestions accordingly [20].

"Ria [Anna] can educate you, give you tips and converse with you like an actual human coach would." – Healthify [16]

Anna’s capabilities extend beyond tracking. She can create personalized meal plans, suggest restaurants, and even provide at-home workout or yoga routines upon request [16]. Supporting 11 languages, Healify caters to a global audience [17]. With over 40 million users, the platform has helped individuals collectively lose more than 25 million pounds. Studies show that combining AI with human coaching leads to 70% greater weight loss compared to AI coaching alone [18].

Challenges and Future of AI Nutritional Tracking

Overcoming Data Accuracy Challenges

AI-driven nutrition tracking has come a long way, but it’s not without its hurdles. One of the biggest challenges is accounting for hidden ingredients. Items like cooking oils, butter, sauces, and dressings can add an extra 100–300 calories to a meal without visibly altering its appearance [4][21]. For instance, a 2024 study revealed that AI underestimated the calorie content of bubble tea by 76% and overestimated beef pho by 49% due to hidden ingredients and the difficulty of estimating portions in broth-based dishes [15].

Flat 2D photos and inconsistent food densities further complicate portion estimation, especially for layered or blended meals like casseroles, stews, or smoothies. Once ingredients are mixed or blended, identifying them individually becomes nearly impossible for AI systems [4][21].

The solution? Combining multiple data sources. Users can add quick text notes like “cooked in 1 tbsp olive oil” to supplement photos, helping AI account for what the camera misses [15]. Smartphones equipped with LiDAR can capture precise 3D models of meals, improving portion accuracy [4]. Additionally, a "human-in-the-loop" approach - where users review and correct AI-generated logs - helps refine the system over time [4][15].
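A text note like the one above can be folded into a photo estimate with very little logic. This sketch parses tablespoon amounts for a couple of known fats and adds them to the photo-based total; the per-tablespoon calorie values are approximate, and the parser, table, and function names are hypothetical rather than any app's actual implementation.

```python
import re

# Approximate calories per tablespoon (rounded, for illustration).
KCAL_PER_TBSP = {"olive oil": 119.0, "butter": 102.0}


def extra_kcal_from_note(note: str) -> float:
    """Sum calories for every '<amount> tbsp <ingredient>' found in the note."""
    text = note.lower()
    total = 0.0
    for name, kcal in KCAL_PER_TBSP.items():
        pattern = rf"(\d+(?:\.\d+)?)\s*tbsp\s+(?:of\s+)?{re.escape(name)}"
        for amount in re.findall(pattern, text):
            total += float(amount) * kcal
    return total


def corrected_total(photo_kcal: float, note: str) -> float:
    """Photo-based estimate plus whatever the note says the camera missed."""
    return photo_kcal + extra_kcal_from_note(note)


print(corrected_total(450.0, "cooked in 1 tbsp olive oil"))
print(corrected_total(450.0, "1 tbsp olive oil and 2 tbsp butter"))
```

In practice a language model or a fuller ingredient parser would replace the regular expression, but the principle is the same: the note supplies quantities the image cannot.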

"The hard part is not food recognition. The hard part is estimating what the camera cannot see, what the voice prompt did not specify, and what the database entry quietly got wrong."

– Stephen M. Walker II, Product Leader, Fuel Nutrition [15]

Addressing these challenges is essential as AI nutrition tracking evolves toward more accurate and personalized solutions.

The Future of Personalized Nutrition

The future of personalized nutrition is moving far beyond basic calorie counting. Platforms like Healify are already pushing boundaries to refine tracking capabilities. Precision nutrition is on the rise, with AI integrating dietary data with insights from genetics, gut microbiome analysis, and continuous glucose monitoring to better understand how each individual processes nutrients [22]. Soon, systems may even predict your blood sugar response to a specific meal based on your unique metabolic profile [23].

Advancements like video-based tracking, which uses multi-angle shots to create 3D meal models, are set to improve portion accuracy significantly [4]. Cutting-edge technologies such as metagenome-informed metaproteomics and DNA metabarcoding (FoodSeq) are paving the way for molecular-level analysis of nutrient digestion and absorption [22]. In the future, AI might even measure how effectively your body absorbs nutrients and detect nutritional deficiencies.

Another key development will be explainable AI, which can enhance user trust. Imagine an app explaining why it made a specific recommendation, such as "protein intake increased because you're 15 grams below your target" [23]. When combined with real-time wearable data on metrics like chewing, heart rate, and sleep, AI could provide a full picture of how your diet impacts your overall health [23][1].
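An explanation of that kind can be as simple as reporting the gap that triggered the recommendation. The sketch below is a hypothetical rule-based version for protein only; the function name and wording are illustrative assumptions, and real explainable-AI systems would draw on far more context.

```python
def explain_protein_gap(target_g: float, eaten_g: float) -> str:
    """Return a plain-language reason for a protein recommendation."""
    gap = target_g - eaten_g
    if gap > 0:
        return (f"Protein suggestion increased because you're "
                f"{gap:g} grams below your target.")
    return "No protein change suggested: you have met your target."


print(explain_protein_gap(target_g=120, eaten_g=105))
print(explain_protein_gap(target_g=120, eaten_g=125))
```

Surfacing the number behind the advice lets users check it against their own log, which is the trust benefit the paragraph above describes.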

"We are at the beginning of a new era where nutrition advice can be timely, personalized, and experimentally validated."

– Alex Morgan, Senior Editor & Nutrition Product Strategist [23]

Conclusion and Key Takeaways

AI's Impact on Health and Wellness

AI has reshaped the way we monitor our diets. Instead of tediously searching food databases or estimating portion sizes, you can now log meals in seconds using a photo or voice command [17]. The accuracy of these systems is a major leap forward - AI consistently outperforms traditional manual tracking methods [3]. But this isn't just about saving time. AI-based tracking has been linked to a 14% average reduction in body mass over six months and 23% better adherence to nutritional goals [17].

What makes this truly transformative is AI's ability to turn raw data into personalized, actionable advice. Instead of just presenting numbers, these systems provide real-time feedback, like whether you're hitting your protein goals, staying in a calorie deficit, or consuming too much sodium. For example, users have reported a 31% decrease in high-sodium meal consumption when AI alerts are activated. By reducing errors and simplifying tracking, AI removes the mental strain that often causes people to give up on diet apps [1][2].

"AI-powered image recognition with curated food databases demonstrates substantially superior calorie tracking accuracy... with clinical implications for weight management and chronic disease monitoring."

– Hayes J, Santos M, Chen D, Nutrition Research Review [3]

These advancements set the stage for Healify's comprehensive approach to personalized nutrition.

Why Healify is a Game-Changer

Healify takes these AI-driven innovations to the next level by integrating them with your complete health profile. Instead of treating food logs as isolated data points, Healify’s AI coach, Anna, combines your dietary data with information from wearables, biometrics, blood tests, and lifestyle habits. The result? 24/7 personalized guidance tailored to your unique health needs.

This isn’t just about counting calories - it’s about understanding how your meals impact your energy, sleep, stress levels, and overall well-being. Healify simplifies complex health data into actionable steps, providing instant feedback on what to eat and when. Whether you're managing a chronic condition, improving athletic performance, or just aiming to feel better, Healify delivers insights that used to require expensive consultations with specialists - all conveniently accessible on your iPhone.

FAQs

How does AI estimate portion size from a single photo?

AI uses computer vision to identify each food item and then estimates its volume from visual cues such as plate coverage, reference objects like the plate or utensils, and shadows. That volume is converted to a portion size and matched against a food database to produce nutritional values. Estimates from a single 2D photo remain approximate, especially for layered or blended dishes.

How can I log oils, sauces, and other hidden ingredients accurately?

A photo alone usually can't reveal oils, sauces, and other hidden ingredients, so pair AI food recognition with a quick manual step. Here's how it works:

- Take a clear photo: Snap a picture of your meal, ensuring the entire plate and any packaging are visible.

- Add what the camera can't see: Use a short text or voice note, such as "cooked in 1 tbsp olive oil", to record oils, butter, sauces, and dressings.

- Double-check and adjust: Review the AI's estimates and make any necessary adjustments for accuracy.

This approach helps you create detailed and accurate food logs with little extra effort.

What wearable signals can AI use to detect eating in real time?

AI can detect eating in real time by interpreting data from wearable devices. These wearables gather signals such as bio-impedance, inertial motion data from wrist and head movements, and physiological metrics like heart rate variability and continuous glucose levels. By analyzing these signals together, AI can uncover patterns and behaviors linked to food consumption.