Deep learning is transforming healthcare by predicting treatment outcomes using patient data like electronic health records, lab results, and wearable device metrics. Unlike traditional methods, it identifies complex patterns in patient health to forecast recovery, complications, or treatment success. These predictions enable personalized care, timely interventions, and optimized resource use. However, challenges like fragmented data, biases, and interpretability issues remain. Advanced models like RNNs, transformers, and graph neural networks are being used to process sequential and multimodal data, offering detailed insights into patient outcomes. Tools like BondMCP simplify data integration, enabling real-time predictions and actionable recommendations, while ethical and regulatory measures help keep deployment safe.

Main Deep Learning Methods for Outcome Prediction

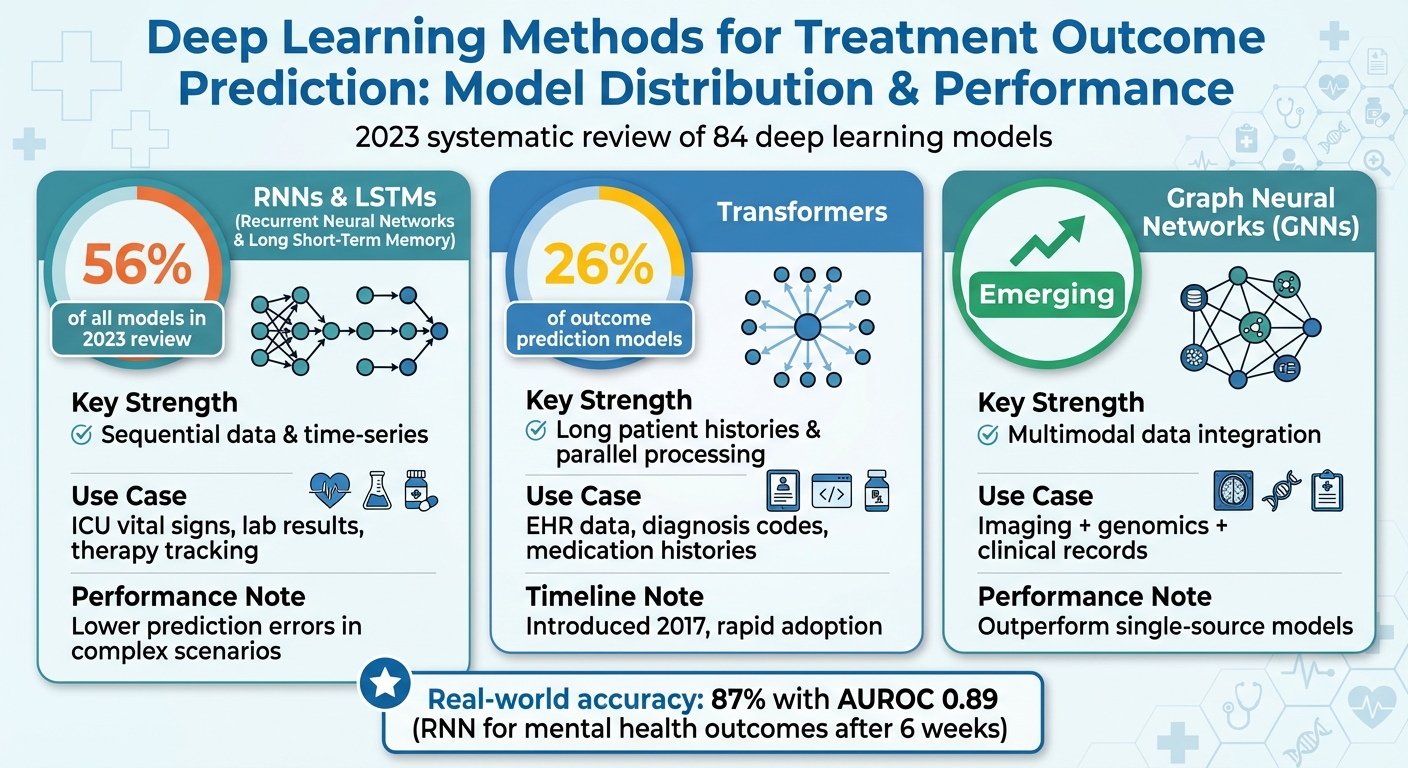

Deep Learning Model Distribution and Performance in Healthcare Outcome Prediction

Deep learning has opened up new possibilities for predicting treatment outcomes, thanks to its ability to handle complex and dynamic patient data. Several architectures stand out for their effectiveness in this area, each tailored to specific data types and challenges.

Recurrent Neural Networks (RNNs) and LSTMs

Recurrent Neural Networks (RNNs) and their advanced counterpart, Long Short-Term Memory (LSTM) networks, are particularly suited for sequential data. These models excel at capturing patterns over time, making them ideal for tracking changes in a patient’s condition - whether it’s hourly ICU vital signs, weekly lab results, or therapy session symptom scores.

A key advantage of LSTMs over standard RNNs is their ability to retain long-term dependencies through specialized gate mechanisms. These gates address the "forgetting" problem in standard RNNs, where information from early time steps fades as sequences grow longer, and allow LSTMs to analyze extended sequences of health data effectively. For instance, MIT's G-Net model leveraged this capability to predict counterfactual outcomes, achieving lower prediction errors than the baseline models it was compared against [2].
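To make the gate mechanism concrete, here is a minimal sketch of one time step of a single-unit LSTM in plain Python. The weight values are hypothetical and chosen only to show the effect: a forget gate near 1 and an input gate near 0 let the cell state pass through almost unchanged, which is how earlier information is retained. Real models learn these weights and use vectors rather than scalars.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, weights):
    """One time step of a single-unit LSTM.

    weights maps each gate name to (input weight, recurrent weight, bias).
    """
    def pre_activation(gate):
        w_x, w_h, bias = weights[gate]
        return w_x * x + w_h * h_prev + bias

    forget = sigmoid(pre_activation("forget"))    # how much old memory to keep
    write = sigmoid(pre_activation("input"))      # how much new input to store
    read = sigmoid(pre_activation("output"))      # how much memory to expose
    candidate = math.tanh(pre_activation("candidate"))

    c = forget * c_prev + write * candidate       # updated cell state
    h = read * math.tanh(c)                       # updated hidden state
    return h, c

# Hypothetical weights: forget gate wide open, input gate nearly closed.
weights = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}
h, c = lstm_step(x=5.0, h_prev=0.0, c_prev=0.8, weights=weights)
print(round(c, 3))  # the cell state stays close to its previous value of 0.8
```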

In a 2023 review examining 84 deep learning models for sequential diagnosis codes, RNNs and LSTMs accounted for 56% of all models [4]. Their widespread use highlights their natural fit for time-ordered medical records. Building on this foundation, transformers offer a newer alternative by processing sequences in a fundamentally different way.

Transformer Models in Healthcare

Transformers, introduced in 2017, have quickly become a powerful tool in healthcare. Unlike RNNs, which process data step by step, transformers utilize self-attention mechanisms to analyze entire sequences simultaneously. This allows them to identify the most relevant past events - such as a diagnosis, medication adjustment, or hospitalization - even if these events occurred months or years earlier.
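The core of self-attention can be sketched in a few lines. The example below computes scaled dot-product attention for a single query against a short history of past events. The two-dimensional event embeddings are made up for illustration, and the learned query, key, and value projections of a real transformer are omitted. Full self-attention repeats this computation with every event acting as the query.

```python
import math

def softmax(scores):
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Weighted average of the value vectors, one output dimension at a time.
    output = [
        sum(w * value[j] for w, value in zip(weights, values))
        for j in range(len(values[0]))
    ]
    return weights, output

# Hypothetical embeddings for three past events (oldest first).
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.0]]
values = [[1.0], [2.0], [3.0]]
weights, output = attend([4.0, 0.0], keys, values)
print([round(w, 2) for w in weights])  # the oldest event gets the largest weight
```

Because every event is scored against the query directly, an event from long ago can receive the highest weight regardless of how many steps separate it from the present.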

Despite being relatively new, transformers already accounted for 26% of outcome prediction models in the same 2023 systematic review [4]. Their popularity stems from their ability to handle large datasets and long patient histories effectively. Transformers are particularly adept at analyzing longitudinal electronic health record data, including diagnosis codes (ICD-9/10), procedure codes, and medication histories, to predict outcomes like mortality, hospitalization risk, or disease progression.

Researchers have found that incorporating time-aware features - such as the intervals between clinic visits or the time since a treatment change - enhances transformer performance. For example, distinguishing between a patient who missed one appointment and another who disengaged from care for months can significantly impact predictions. When relationships between data points become critical, Graph Neural Networks (GNNs) offer an alternative approach.
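As a rough illustration of time-aware features, the sketch below derives visit gaps and time since the last visit from a list of dates. The function name and the returned keys are hypothetical, not taken from any cited study.

```python
from datetime import date

def visit_gap_features(visit_dates, as_of):
    """Summarize spacing between visits, in days."""
    ordered = sorted(visit_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    return {
        "gaps_days": gaps,
        "max_gap_days": max(gaps) if gaps else 0,
        "days_since_last_visit": (as_of - ordered[-1]).days,
    }

visits = [date(2024, 1, 1), date(2024, 1, 15), date(2024, 4, 15)]
features = visit_gap_features(visits, as_of=date(2024, 5, 1))
print(features["max_gap_days"])  # a three-month gap stands out from a two-week one
```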

Graph Neural Networks (GNNs) and Multimodal Models

Graph Neural Networks (GNNs) take a unique approach by structuring health data as networks of interconnected entities. In these graphs, nodes can represent patients, medications, diagnoses, or lab results, while edges capture the relationships between them. This setup is especially effective for integrating diverse data types, such as imaging scans, genomic profiles, lab results, and clinical notes, into a cohesive prediction.

By propagating information across the graph, GNNs can combine inputs in a way that mirrors real-world clinical relationships. For example, a GNN might connect tumor imaging features with lab markers of immune function and treatment history to predict cancer outcomes. Although GNNs were less common than RNNs or transformers in the 2023 review, they are increasingly used when the relationships between data elements are as important as the data itself [4].
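Information propagation across a graph can be sketched as one round of message passing, in which each node averages its own features with those of its neighbors. This is a simplified illustration: the node names and feature values are invented, and the learned weight matrices and nonlinearities of a real GNN layer are left out.

```python
def message_passing_round(features, edges):
    """Replace each node's features with the mean over itself and its neighbors."""
    neighbors = {node: [] for node in features}
    for a, b in edges:  # treat edges as undirected
        neighbors[a].append(b)
        neighbors[b].append(a)

    updated = {}
    for node, own in features.items():
        group = [own] + [features[n] for n in neighbors[node]]
        updated[node] = [sum(column) / len(group) for column in zip(*group)]
    return updated

# Hypothetical graph: one patient linked to a medication and a lab result.
features = {"patient": [1.0, 0.0], "drug": [0.0, 1.0], "lab": [0.0, 0.0]}
edges = [("patient", "drug"), ("patient", "lab")]
print(message_passing_round(features, edges)["patient"])
```

Stacking several such rounds lets information travel further, so a lab node can influence a drug node through the patient they share.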

Multimodal models, which combine different data types, often outperform models relying on a single source. This is especially true in fields like oncology, critical care, and mental health [4][6][5]. For instance, a hybrid model might use convolutional neural networks (CNNs) to analyze medical images, transformers or RNNs for sequential clinical data, and fusion layers to integrate these insights before making predictions. Such approaches reflect the reality that patient outcomes are influenced by a complex interplay of factors, which no single data source can fully capture.

The choice of method depends on the structure of your data and your specific goals. For time-series data like vital signs and treatment timelines, LSTMs are a reliable choice. When dealing with long electronic health record histories, transformers offer scalability and, through their attention weights, a degree of interpretability. For scenarios requiring the integration of imaging, molecular data, and clinical records, multimodal architectures with GNN components provide the most comprehensive solution.

Data Processing and Model Evaluation Methods

Creating reliable models begins with defining a patient cohort. This involves using ICD codes, procedure codes, and specific timeframes to identify relevant populations. Data integration from sources like EHRs, claims, wearables, and patient reports is also essential [4]. Another critical step is selecting an index time - such as the start of treatment, a surgery date, or ICU admission - and establishing a look-back period to collect the necessary historical data [4][3].
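The cohort, index time, and look-back steps can be sketched as follows. This is a minimal illustration over in-memory records: the event layout and the `CHEMO_START` index code are hypothetical, and a real pipeline would run the same logic against a database or data warehouse.

```python
from datetime import date, timedelta

def build_cohort(events, index_codes, lookback_days):
    """Pick each patient's first index event and collect history before it."""
    index_dates = {}
    for event in events:
        if event["code"] in index_codes:
            pid = event["patient_id"]
            if pid not in index_dates or event["date"] < index_dates[pid]:
                index_dates[pid] = event["date"]

    cohort = {}
    for pid, index_date in index_dates.items():
        window_start = index_date - timedelta(days=lookback_days)
        # Only events strictly before the index date, to avoid leaking the future.
        history = [
            e for e in events
            if e["patient_id"] == pid and window_start <= e["date"] < index_date
        ]
        cohort[pid] = {"index_date": index_date, "history": history}
    return cohort

events = [
    {"patient_id": "A", "code": "E11.9", "date": date(2023, 1, 10)},
    {"patient_id": "A", "code": "I10", "date": date(2023, 5, 1)},
    {"patient_id": "A", "code": "CHEMO_START", "date": date(2023, 6, 1)},
    {"patient_id": "B", "code": "I10", "date": date(2023, 4, 1)},
]
cohort = build_cohort(events, index_codes={"CHEMO_START"}, lookback_days=90)
print(sorted(cohort))  # patient B has no index event and is excluded
```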

Building Data Pipelines for Longitudinal Health Data

When working with longitudinal health data, aligning events over time is crucial. Because patient visits occur irregularly, events are often aggregated into fixed intervals, such as daily or weekly summaries, so that models like RNNs and transformers receive evenly spaced inputs [4][3].
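A simple way to picture this alignment is to bucket irregular measurements into weekly means relative to the index date. The sketch below assumes measurements arrive as (date, value) pairs, and the function name is illustrative only.

```python
from datetime import date

def weekly_means(measurements, index_date, n_weeks):
    """Average values per week; week 0 starts on index_date. Empty weeks are None."""
    buckets = [[] for _ in range(n_weeks)]
    for when, value in measurements:
        week = (when - index_date).days // 7
        if 0 <= week < n_weeks:  # ignore anything outside the window
            buckets[week].append(value)
    return [sum(b) / len(b) if b else None for b in buckets]

readings = [
    (date(2024, 1, 2), 100.0),
    (date(2024, 1, 5), 110.0),
    (date(2024, 1, 20), 90.0),
]
print(weekly_means(readings, index_date=date(2024, 1, 1), n_weeks=3))
# → [105.0, None, 90.0]
```

The `None` in the middle week is deliberate: it marks a gap that the missing-data handling step has to deal with explicitly.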

Feature engineering plays a significant role here. For instance, creating code embeddings can highlight clinical relationships, while encoding time intervals between events and summarizing visit-level data (using metrics like minimum, maximum, and mean values) provides a structured view of patient histories. Handling missing data is another challenge. Strategies like mask vectors, time-gap encoding, and domain-specific imputation methods (e.g., carrying forward the last observation) are often employed to address this [4][3].
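The three missing-data strategies mentioned above can be combined in one pass. The following sketch takes a series with `None` for missing steps and returns the carried-forward values, a mask vector, and a time-gap counter. It is an illustration of the general idea rather than the method of any specific cited model.

```python
def impute_with_mask(series):
    """Carry the last observation forward and record what was actually observed.

    Returns (filled, mask, gaps):
      filled - values with gaps filled by the last observation (None if none yet)
      mask   - 1 where the value was observed, 0 where it was imputed
      gaps   - steps since the last observation (or since the sequence start)
    """
    filled, mask, gaps = [], [], []
    last_value, steps_since = None, 0
    for value in series:
        if value is None:
            steps_since += 1
            filled.append(last_value)
            mask.append(0)
        else:
            last_value, steps_since = value, 0
            filled.append(value)
            mask.append(1)
        gaps.append(steps_since)
    return filled, mask, gaps

filled, mask, gaps = impute_with_mask([None, 105.0, None, None, 90.0])
print(filled)  # → [None, 105.0, 105.0, 105.0, 90.0]
print(mask)    # → [0, 1, 0, 0, 1]
print(gaps)    # → [1, 0, 1, 2, 0]
```

Feeding the mask and gap vectors to the model alongside the filled values lets it learn to trust a carried-forward reading less as it grows stale.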

To streamline this process, tools like BondMCP – Health Model Context Protocol offer a standardized way to integrate fragmented data streams. This includes data from wearables, lab results, medications, sleep tracking, and fitness devices, combining them into a unified temporal framework. By doing so, the need for custom extraction and transformation for every new project is significantly reduced.

Evaluation Metrics and Validation Techniques

Once the model is trained, its performance must be assessed using clinically relevant metrics. For example, the area under the receiver operating characteristic curve (AUROC) measures discrimination, while the area under the precision-recall curve (AUPRC) is particularly helpful for rare events [3]. Calibration plots and Brier scores are also important, as they show how well predicted risks (e.g., a 20% chance of relapse) match observed outcomes [4][3].
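For readers who want to see what two of these metrics compute, here is a small from-scratch sketch. AUROC is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, and the Brier score is the mean squared difference between predicted risk and the observed 0/1 outcome. In practice a library such as scikit-learn would normally be used; the labels and scores below are made up.

```python
def auroc(labels, scores):
    """Fraction of positive/negative pairs ranked correctly (ties count half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))

def brier_score(labels, scores):
    """Mean squared error of predicted probabilities; lower is better."""
    return sum((s - y) ** 2 for y, s in zip(labels, scores)) / len(labels)

labels = [1, 0, 1, 0]
scores = [0.9, 0.2, 0.4, 0.6]
print(auroc(labels, scores))                  # → 0.75
print(round(brier_score(labels, scores), 4))  # → 0.1925
```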

A review of 84 deep learning models analyzing sequential diagnosis codes revealed that incorporating diverse features, explicitly modeling time intervals, and using larger datasets consistently improved results [4].

Validation is another critical step and should go beyond the data from the training institution. Temporal validation - training on earlier data and testing on later data - mimics real-world scenarios. External validation, on the other hand, uses data from different health systems, states, or payer mixes to ensure the model performs well across various coding practices and patient demographics [4]. Subgroup analyses, focusing on factors like age, sex, race/ethnicity, insurance type, and comorbidity burden, can further identify potential disparities in performance before deploying a model in U.S. clinical settings [4][3].
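Temporal validation comes down to splitting on time rather than at random. The sketch below assumes each record carries an `index_date` field, which is a hypothetical layout.

```python
from datetime import date

def temporal_split(records, cutoff):
    """Train on patients indexed before the cutoff, test on those at or after it."""
    train = [r for r in records if r["index_date"] < cutoff]
    test = [r for r in records if r["index_date"] >= cutoff]
    return train, test

records = [
    {"patient_id": "A", "index_date": date(2021, 3, 1)},
    {"patient_id": "B", "index_date": date(2022, 7, 15)},
    {"patient_id": "C", "index_date": date(2023, 2, 10)},
]
train, test = temporal_split(records, cutoff=date(2023, 1, 1))
print(len(train), len(test))  # → 2 1
```

A random split would let the model see patients from the same period it is tested on, which tends to overstate how well it will hold up as coding practices and treatments change.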

For example, in a study involving internet-delivered cognitive behavioral therapy, deep RNN models demonstrated over 87% accuracy and an AUROC of approximately 0.89 after just three review periods (around six weeks). This gave clinicians ample time to adjust care before treatment concluded [3]. These evaluation methods lay the groundwork for causal modeling, which predicts outcomes under different treatment scenarios.

Causal Modeling for Counterfactual Outcomes

Risk prediction alone does not tell clinicians what would happen under a different course of action, so models must also estimate outcomes under alternative treatment strategies. G‑computation is one such method: it simulates outcomes under hypothetical interventions, such as switching from one treatment to another at a specific point in time [2].
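The idea behind g-computation is easiest to see in its simplest form: a single treatment decision with one discrete confounder. The sketch below estimates the mean outcome if everyone received a given treatment by averaging stratum-specific outcomes over the whole population. It is a deliberately reduced illustration with made-up data. The time-varying version used by deep learning approaches replaces the stratum averages with learned sequence models and simulates forward step by step.

```python
from collections import defaultdict

def g_computation(rows, treatment):
    """Standardized mean outcome if every patient had received `treatment`.

    rows: (confounder, treatment_received, outcome) tuples.
    """
    stratum_sizes = defaultdict(int)
    outcomes_under_treatment = defaultdict(list)
    for confounder, received, outcome in rows:
        stratum_sizes[confounder] += 1
        if received == treatment:
            outcomes_under_treatment[confounder].append(outcome)

    total = 0.0
    for confounder, size in stratum_sizes.items():
        observed = outcomes_under_treatment[confounder]
        if not observed:
            raise ValueError(f"no patients received {treatment!r} in stratum {confounder!r}")
        # Weight each stratum's mean outcome by its share of the population.
        total += (sum(observed) / len(observed)) * size / len(rows)
    return total

# Made-up data: severe patients are treated more often and recover less often.
rows = [
    ("mild", 1, 1), ("mild", 0, 1), ("mild", 0, 1), ("mild", 0, 0),
    ("severe", 1, 1), ("severe", 1, 0), ("severe", 1, 0), ("severe", 0, 0),
]
effect = g_computation(rows, 1) - g_computation(rows, 0)
print(round(effect, 3))  # → 0.333
```

In this toy dataset the raw recovery rate is 0.5 in both the treated and untreated groups, so a naive comparison would suggest no benefit. Adjusting for severity reveals the treatment effect that confounding had hidden.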

A notable advancement in this area is G‑Net, described as the first deep-learning approach using g‑computation to predict both population-level and individual-level treatment effects in clinical settings. By combining LSTM architectures with g‑computation, G‑Net effectively handles time-varying confounding [2].

For example, in simulated ICU datasets with 66 time steps and approximately 1,000 patient trajectories per scenario, G‑Net produced counterfactual predictions closely aligned with ground truth. It achieved smaller errors than linear models, multilayer perceptrons, recurrent marginal structural networks, and the Counterfactual Recurrent Network (CRN) [2].

These causal models go beyond standard performance metrics by offering scenario-based predictions. For instance, they can answer questions like, "What happens if therapy is escalated at time t versus if it isn't?" This capability allows clinicians to maintain their expertise while quantitatively estimating the outcomes of different treatment strategies [2].

Trust, Interpretability, and Clinical Integration

Even the most accurate deep learning model will fall short if clinicians don’t trust its predictions or if it disrupts their workflow. A 2023 review of 84 deep learning models for sequential diagnosis codes revealed that 56% relied on RNNs and 26% used transformers, yet many faced challenges in adoption because they functioned as "black boxes" [4]. What builds trust? Predictive accuracy, reliability across diverse populations, interpretability, and compatibility with clinical workflows [4][3]. Clinicians are particularly interested in seeing standard metrics validated across diverse American populations, including groups defined by race, ethnicity, and insurance status [4].

Making Deep Learning Models Interpretable

Attention mechanisms in RNNs and transformers can help pinpoint which visits, diagnoses, or time intervals influenced a prediction. This creates a timeline view where high-attention encounters are flagged [4]. Similarly, saliency maps, adapted from imaging models, can highlight which lab results, vital signs, or clinical notes most impacted the risk prediction. These can be presented as simple feature-importance bars tied to the current encounter [4]. For more complex models, SHAP and LIME provide explanations by approximating the contributions of individual features, making them especially useful for large transformers or ensemble models. Another key tool is uncertainty estimation, which uses techniques like Monte Carlo dropout, deep ensembles, or calibrated probability outputs to flag low-confidence predictions, prompting mandatory human review [2][3].
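Uncertainty-based flagging can be sketched independently of how the repeated predictions are produced. The function below takes several risk estimates for the same patient, for example from members of a deep ensemble or from repeated Monte Carlo dropout passes, and flags the case for review when they disagree too much. The 0.1 threshold is an arbitrary placeholder that a clinical team would need to set.

```python
from statistics import mean, pstdev

def summarize_predictions(predictions, max_std=0.1):
    """Combine repeated risk estimates and flag high disagreement."""
    spread = pstdev(predictions)
    return {
        "risk": mean(predictions),
        "std": spread,
        "needs_review": spread > max_std,  # low confidence goes to a human
    }

confident = summarize_predictions([0.30, 0.32, 0.28])
uncertain = summarize_predictions([0.10, 0.50, 0.90])
print(confident["needs_review"], uncertain["needs_review"])  # → False True
```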

For clinicians, these interpretability tools should be integrated into user-friendly interfaces. Examples include AI tools for patient-centered treatment plans that feature ranked lists of "top contributors", heatmaps of patient timelines, or risk predictions with confidence bands. The goal is to avoid overwhelming users with raw data or complex mathematical explanations. These techniques lay the groundwork for smooth integration into clinical workflows.

Integrating Predictions into Clinical Workflows

Successful integration involves three main components: data pipelines, prediction services, and front-end design. Models rely on data from HL7 v2, FHIR APIs, or harmonized data warehouses. A real-time or near-real-time inference service - often a containerized model with REST or FHIR endpoints - processes this data and delivers risk scores or recommendations. Within EHR systems like Epic or Cerner, these predictions are displayed using familiar tools such as Best Practice Advisory alerts, risk flags in problem lists, or embedded dashboards within order sets. The aim is to minimize context-switching by avoiding standalone apps.

To reduce alert fatigue, thresholds can be fine-tuned by clinicians, and smart suppression rules (e.g., limiting alerts to one per admission for high-risk patients) can be implemented. Governance structures, such as clinical decision support committees and IT change control boards, play a critical role by reviewing logs of prediction usage, overrides, and outcomes. This allows for ongoing refinement of rules. For integrated systems like BondMCP, bidirectional EHR integration using FHIR enables data from outpatient visits, home monitoring, and wellness programs to inform inpatient or ambulatory risk models, all while maintaining the EHR as the legal medical record.
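A suppression rule of the kind described above can be as small as the following sketch, which fires at most one alert per admission once risk crosses a clinician-tunable threshold. The identifiers and the default threshold are hypothetical, and a production system would persist the alert history rather than hold it in memory.

```python
def should_alert(risk, admission_id, already_alerted, threshold=0.8):
    """Return True only for the first above-threshold score in an admission."""
    if risk < threshold or admission_id in already_alerted:
        return False
    already_alerted.add(admission_id)  # remember so later scores stay silent
    return True

alerted = set()
print(should_alert(0.90, "adm-1", alerted))  # → True
print(should_alert(0.95, "adm-1", alerted))  # → False
print(should_alert(0.50, "adm-2", alerted))  # → False
```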

Ethical and Regulatory Considerations

Technical performance and workflow integration aren’t enough - ethical and regulatory standards must also guide how models are deployed. HIPAA enforces strict rules for handling PHI, affecting where models are trained (on-premises vs. cloud), how identifiers are managed, and how data from consumer devices or apps is linked to clinical records [4]. Many predictive tools qualify as Software as a Medical Device (SaMD) under FDA oversight. Depending on their purpose - whether aiding diagnosis or risk stratification - they may require 510(k) clearance, De Novo classification, or premarket approval, along with clinical validation.

Ethical challenges include algorithmic bias (models trained on unbalanced datasets may perform poorly for minority racial/ethnic groups, rural populations, or uninsured patients), automation bias (over-reliance on model outputs despite uncertainty), and reinforcement of flawed practices (models inheriting historical under-treatment or over-treatment patterns embedded in U.S. claims or EHR data) [4][3]. Addressing these issues involves several safeguards: reporting performance metrics by race, ethnicity, sex, age, insurance type, and geography; conducting fairness audits and adjusting training data; clearly labeling the model’s role (assistive vs. prescriptive) and displaying uncertainty; and training users on how to interpret and override predictions appropriately.
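Reporting performance by subgroup is straightforward to automate. The sketch below computes accuracy per group and the largest gap between groups, using invented group labels and scores. A real fairness audit would apply the same breakdown to AUROC, calibration, and other metrics.

```python
from collections import defaultdict

def accuracy_by_group(records, threshold=0.5):
    """records: (group, label, score) tuples. Returns accuracy per group."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, label, score in records:
        totals[group] += 1
        hits[group] += int((score >= threshold) == bool(label))
    return {group: hits[group] / totals[group] for group in totals}

records = [
    ("insured", 1, 0.9), ("insured", 0, 0.2),
    ("uninsured", 1, 0.3), ("uninsured", 0, 0.1),
]
per_group = accuracy_by_group(records)
gap = max(per_group.values()) - min(per_group.values())
print(per_group, gap)  # → {'insured': 1.0, 'uninsured': 0.5} 0.5
```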

Health systems can establish independent ethics or AI governance boards to oversee new models, monitor for harm or disparities, and mandate retraining or decommissioning of models that degrade over time or exacerbate inequities. These measures ensure that models not only perform well technically but also align with ethical and regulatory standards.

How BondMCP Supports Treatment Outcome Prediction

BondMCP tackles the challenge of fragmented data and model integration by offering a streamlined solution to support deep learning models aimed at predicting treatment outcomes. Models like RNNs, transformers, and causal networks all require clean, structured, and longitudinal data to function effectively. However, U.S. health systems often deal with scattered data sources, including EHRs, wearables, lab results, and fitness apps. This lack of integration forces data science teams to spend months preparing data before models can even begin training. BondMCP eliminates this hurdle by unifying data streams through contextual data fusion into a structured timeline, ready for deep learning models to process.

Unified Data Context for Health Optimization

BondMCP creates a comprehensive patient timeline by combining data from various sources, such as:

- EHRs: Diagnosis codes, medication records, and encounter types.

- Wearables: Metrics like sleep stages, HRV, and glucose levels (mg/dL).

- Labs: Results including creatinine levels and lipid panels.

- Intervention Logs: Records of therapy sessions, supplement protocols, or training plans.

The platform resolves patient identities across systems, aligns timestamps, and uses a health-specific ontology to standardize events like medication changes or training modifications. These are represented as intervention tokens with associated time intervals, creating a structured sequence of (state, treatment, outcome) tuples. This format is ideal for sequential models, making it easier for them to process and analyze.

BondMCP also targets clinical-grade accuracy by validating data through more than 10 medically trained AI models, including Claude, GPT-4, and Titan. This validation is intended to minimize errors and reduce the risk of hallucinations, providing higher-quality data for downstream models that predict treatment outcomes [1].

Real-Time Intervention Adjustments

Once a deep learning model predicts an outcome - say, an RNN forecasting that a patient undergoing internet-based CBT has a low likelihood of improvement by week 8 - BondMCP facilitates real-time adjustments. The platform continuously updates patient data, incorporating metrics like sleep quality, HRV, weekly PHQ-9 scores, and engagement levels. If predictions indicate a risk, AI agents on BondMCP can suggest changes like altering supplement schedules, adjusting training intensity, or flagging the need for more intensive therapy [3].

This system moves beyond static dashboards, enabling near real-time predictions and recommendations. Clinicians can review, modify, or approve these suggestions within their existing workflows, using BondMCP as a support tool rather than an autonomous decision-maker. For causal models like G-Net, which estimate counterfactual outcomes under different treatment strategies, BondMCP’s structured data enables precise queries like, “What happens if we adjust the protocol at week 4 instead of week 8?” The platform then operationalizes the chosen strategy seamlessly [2].

Scaling Precision Health Through BondMCP

Scaling precision health in the U.S. requires managing data from numerous sources, integrating multiple AI models, and adhering to HIPAA compliance. BondMCP simplifies this process with APIs and SDKs that provide cleaned, time-aligned data directly to model-training pipelines [1]. This allows health systems to add new prediction models - whether for cardiometabolic risk, mental health, or post-surgical recovery - without duplicating infrastructure.

Currently trusted by over 50 health systems worldwide, BondMCP handles more than 2.5 million API calls monthly while maintaining 99.9% uptime [1]. The platform also supports agent orchestration, so that specialized AI agents (e.g., those focused on sleep or glucose control) work together without issuing conflicting recommendations. By issuing cryptographic trust certificates for every AI response and validating data through consensus, BondMCP addresses concerns about trust and interpretability [1]. This foundation helps turn deep learning insights into actionable, scalable interventions while maintaining the compliance and ethical standards discussed earlier in this guide.

Future Trends in Deep Learning for Treatment Outcomes

Building on the deep learning techniques and data integration strategies discussed earlier, upcoming advancements are set to refine how treatment outcomes are predicted, offering even greater precision and adaptability.

Advances in Multimodal and Sequential Models

The future of deep learning lies in multimodal fusion architectures that combine diverse data types like medical imaging, electronic health records (EHRs), genomic information, and wearable device data [4][5]. These systems will employ specialized tools - CNNs for processing imaging data, transformers for decoding diagnosis codes, and temporal CNNs for wearable data streams - to uncover complex interactions across these modalities [4][6][5]. For instance, in U.S. oncology clinics, a single model could integrate PET/CT scans, chemotherapy regimens, lab results (e.g., neutrophil counts), and wearable activity data to predict the likelihood of hospitalization within 14 days [6][5]. Moreover, these models will address challenges like irregular sampling and time intervals, factors already linked to improved prediction accuracy [4]. Large-scale, foundation-style models pre-trained on extensive U.S. EHR datasets are also on the horizon, promising better generalization across hospitals and patient populations compared to current RNN or LSTM-based systems [4].

Personalized and Adaptive Treatment Recommendations

Reinforcement learning (RL) is advancing from theoretical simulations to practical applications using real-world observational data [2][4]. Future RL systems will rely on causal RNNs or transformers to predict how different treatment actions - like adjusting medication doses or intensifying therapy - might affect long-term outcomes such as survival rates or quality-adjusted life years [2][3]. For example, in digital mental health, where deep learning models have already reported over 87% accuracy and an AUROC of approximately 0.89 in predicting depression and anxiety outcomes after about six weeks of treatment, RL could build on these predictions to recommend higher-intensity care for patients unlikely to respond to current treatments [3]. A promising example is G-Net, which uses RNNs to model dynamic treatment strategies. It has outperformed traditional linear models and other RNN variants in predicting outcomes under alternative interventions [2]. This capability allows clinicians to explore "what-if" scenarios - like modifying insulin dosages or chemotherapy protocols - before implementing changes, with built-in uncertainty metrics to guide decision-making [2].

Opportunities for Broader Integration

Looking ahead, deep learning models will enhance care coordination by continuously assessing risk and treatment responses across large patient populations and directing actionable insights to the right stakeholders [4][5]. For U.S.-based payers and population health teams, multimodal predictors could identify patients at high risk of poor outcomes under standard therapies, flagging them for case management or alternative treatments. Telehealth platforms and digital therapeutics could also leverage real-time predictions to adjust care intensity dynamically, rather than adhering to rigid schedules [3]. Tools like BondMCP exemplify how a shared context layer could enable different AI agents - such as treatment outcome predictors, adherence trackers, and cost-management tools - to work together using a unified patient health representation. This approach aligns with value-based care models while adhering to U.S. regulations around privacy and reimbursement. Additionally, evaluation standards are shifting to include multi-site, temporal, and subgroup validation using geographically diverse datasets. These standards aim to ensure fairness by analyzing performance across race, ethnicity, insurance type, and socioeconomic status, setting pre-defined thresholds to promote equitable outcomes [4][3].

FAQs

How do deep learning models like RNNs and transformers help predict treatment outcomes?

Deep learning models, such as recurrent neural networks (RNNs) and transformers, have become invaluable in predicting treatment outcomes. Their strength lies in their ability to process and analyze complex health data over time, making them particularly effective at uncovering patterns in sequential and contextual information like patient histories, lab results, and real-time health metrics.

With these advanced tools, healthcare providers can generate more precise and tailored predictions about how a patient might respond to a treatment or how a condition could evolve. This level of insight supports better decision-making, leading to improved care strategies and, ultimately, better outcomes for patients.

What challenges arise when using deep learning models in clinical workflows?

Integrating deep learning models into clinical workflows comes with its fair share of hurdles. One major obstacle is data fragmentation. Patient information is often scattered across various systems, making it tough to compile a cohesive dataset for training and implementing these models. On top of that, maintaining data privacy and security is absolutely essential, given the highly sensitive nature of healthcare records.

Another critical challenge lies in model interpretability. For clinicians to trust and rely on a model's recommendations, they need clarity on how those predictions are made. Without that transparency, adoption becomes a tough sell. Lastly, aligning the model's outputs with current clinical workflows is no small task. Seamless integration with the tools and processes already in place is necessary to ensure the technology fits naturally into the existing ecosystem.

Tackling these challenges is crucial for realizing the true potential of deep learning in healthcare.

How does BondMCP enable real-time adjustments to treatment plans?

BondMCP keeps your treatment plan in sync with your health by analyzing data from wearables, lab results, and other sources in real time. With AI-driven insights, it adjusts recommendations to match your current health status and changing needs.

This approach ensures your plan remains tailored and adaptable, making it easier to stay on track and work toward improved health outcomes.