NLP, or Natural Language Processing, is transforming mental health care by enabling chatbots to deliver Cognitive Behavioral Therapy (CBT) interventions. These chatbots address accessibility challenges in mental health services, offering 24/7 support and scalable solutions for issues like anxiety and depression. Key NLP techniques such as intent recognition, sentiment analysis, and conversation context tracking allow chatbots to understand user emotions, maintain meaningful conversations, and personalize therapeutic exercises. While these tools provide cost-effective and private support, they have limitations, such as lacking human empathy and struggling with complex cases. Integrating broader health data systems like BondMCP could further enhance their effectiveness by connecting mental and physical health insights.

Key Takeaways:

- What NLP Does: Enables chatbots to understand language, context, and emotions for mental health support.

- How It Helps: Provides CBT techniques like thought challenging and mood tracking, accessible 24/7.

- Challenges: Limited ability to handle complex emotions, plus open questions about data security.

- Future Outlook: Integration with health data systems for more personalized care.

NLP-powered CBT chatbots are a valuable tool for improving access to mental health care, especially for mild to moderate symptoms, but they work best as a complement to professional therapy.

Key NLP Methods Used in CBT Chatbots

CBT chatbots rely on advanced natural language processing (NLP) techniques to understand users and deliver meaningful mental health support. These methods ensure that interactions remain personalized and responsive, helping users feel understood and supported. Here's a closer look at the key techniques that power these chatbots.

Intent Recognition

Intent recognition enables chatbots to figure out what users are trying to communicate or achieve through their messages. Unlike simple keyword matching, this method focuses on understanding the user’s underlying purpose - whether it’s seeking relief from anxiety, expressing frustration, or requesting coping strategies.

In mental health settings, intent recognition is especially nuanced. For instance, a message like "I can't handle this anymore" could mean the user needs immediate crisis support, is venting frustration, or is looking for guidance. To respond appropriately, the chatbot analyzes the full context, including past interactions and emotional cues.

Crisis Text Line, for example, uses intent recognition to flag messages containing language patterns associated with suicidal thoughts or severe distress. The AI performs the initial assessment, which helps prioritize cases for human counselors.

The precision of intent recognition has a direct impact on how effective the chatbot is. For example, if a user wants help with a core CBT technique like thought challenging, the chatbot must detect this intent to guide them through the right exercises. Misinterpreted intent, on the other hand, could lead to generic responses that fail to address the user's needs.
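As a rough illustration of the idea, the sketch below scores a message against a few cue phrases per intent and lets crisis cues override everything else. The intent labels, cue phrases, and the `recognize_intent` function are assumptions made up for this example; production systems typically use trained classifiers and conversation history rather than keyword lists.

```python
import re
from typing import Dict, List

# Hypothetical intent labels and cue phrases, chosen only for illustration.
INTENT_CUES: Dict[str, List[str]] = {
    "crisis": ["can't go on", "end it all", "hurt myself"],
    "thought_challenging": ["challenge my thoughts", "negative thoughts", "reframe"],
    "coping_request": ["calm down", "coping", "breathing exercise"],
    "venting": ["so frustrated", "fed up", "sick of"],
}

# Order used to break ties between non-crisis intents.
PRIORITY: List[str] = ["thought_challenging", "coping_request", "venting"]


def recognize_intent(message: str) -> str:
    """Return the best-matching intent label, or 'unknown' if no cue matches."""
    # Normalize case, curly apostrophes, and repeated whitespace.
    text = re.sub(r"\s+", " ", message.lower().replace("\u2019", "'"))
    scores = {
        intent: sum(cue in text for cue in cues)
        for intent, cues in INTENT_CUES.items()
    }
    # Safety first: any crisis cue wins, regardless of other scores.
    if scores["crisis"] > 0:
        return "crisis"
    best = max(PRIORITY, key=lambda intent: scores[intent])
    return best if scores[best] > 0 else "unknown"


print(recognize_intent("Can you help me reframe these negative thoughts?"))
```

An ambiguous message such as "I can't handle this anymore" matches none of these cues and comes back as `unknown`, which is exactly the case where context and emotional signals have to do the work.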

This foundation of accurate intent detection sets the stage for the chatbot’s ability to adjust emotionally and contextually, which we'll explore next.

Sentiment Analysis

Building on intent recognition, sentiment analysis allows chatbots to understand the emotional tone of a user’s messages. This goes beyond basic positive or negative labels to identify specific emotions like anxiety, hopelessness, or distress, enabling the chatbot to respond in a way that aligns with the user’s feelings.

"Sentiment analysis, a natural language processing (NLP) technique, has emerged as a promising tool for understanding and evaluating human emotions, particularly in the context of mental health."

- O. E. Olorunniwo, Mayowa Alonge, & Olatunji Isreal, Obafemi Awolowo University [1]

For example, Woebot, a popular mental health chatbot, was evaluated in a randomized controlled trial in which users experienced noticeable reductions in depression and anxiety symptoms after just two weeks of interaction [1]. Results like these depend partly on detecting emotional distress accurately, and BERT-based models have been reported to reach up to 93% precision on this task [3].

Research also suggests that people share vulnerable emotions, such as sadness or depression, far more readily with chatbots (49.83%) than on social media platforms (7.53%) [4]. Tess, another AI mental health coach, uses sentiment analysis to tailor its conversations based on users’ emotional states and psychological profiles, leading to improvements in emotional resilience [1].

Cultural differences also play a role in how emotions are expressed. For instance, users in Eastern regions may convey stronger emotional reactions to depression, while Western users are more likely to discuss sensitive mental health topics openly [4]. By picking up on these subtle cues, chatbots can adjust their responses in real time to better meet the emotional needs of users.
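To make the difference between polarity and emotion-level labels concrete, here is a minimal lexicon-based sketch. It is not the BERT-based approach cited above: the tiny `EMOTION_LEXICON` and both function names are invented for illustration, and a real system would use a trained model.

```python
import re
from collections import Counter
from typing import Dict, Optional

# Tiny illustrative lexicon mapping words to emotion labels (an assumption).
EMOTION_LEXICON: Dict[str, str] = {
    "worried": "anxiety", "nervous": "anxiety", "panic": "anxiety",
    "hopeless": "hopelessness", "pointless": "hopelessness",
    "overwhelmed": "distress", "exhausted": "distress",
    "calm": "calm", "relieved": "calm",
}


def detect_emotions(message: str) -> Dict[str, float]:
    """Return each detected emotion's share of the emotion words found."""
    tokens = re.findall(r"[a-z']+", message.lower())
    counts = Counter(EMOTION_LEXICON[t] for t in tokens if t in EMOTION_LEXICON)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {emotion: n / total for emotion, n in counts.items()}


def dominant_emotion(message: str) -> Optional[str]:
    """Return the most frequent emotion label, or None if nothing matched."""
    scores = detect_emotions(message)
    return max(scores, key=scores.get) if scores else None


print(detect_emotions("I feel worried and nervous, and honestly hopeless"))
```

A word list like this cannot handle negation, sarcasm, or the cultural variation described above, which is why contextual models are preferred in practice.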

Conversation Context Tracking

Conversation context tracking allows chatbots to maintain continuity in their interactions. By remembering previous discussions, tracking therapeutic progress, and building user profiles, this method ensures that sessions feel connected and meaningful over time.

This process involves two levels of memory: short-term memory for keeping conversations coherent and long-term memory for recalling past sessions, mood patterns, and treatment adjustments. For CBT, which often involves step-by-step skill building, this continuity is essential. For example, if a user has practiced breathing exercises in a prior session, the chatbot can follow up to check on progress and suggest advanced techniques if needed.
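The two memory levels can be sketched as a bounded buffer of recent turns plus a per-session record. The `ConversationMemory` class and its method names are hypothetical, and the sketch keeps everything in process memory, whereas a real deployment would need encrypted, persistent storage.

```python
from collections import deque
from typing import Deque, Dict, Iterable, List


class ConversationMemory:
    """Two-level memory: recent turns plus a long-term record per session."""

    def __init__(self, max_turns: int = 6) -> None:
        # Short-term memory: only the most recent turns are kept.
        self.short_term: Deque[str] = deque(maxlen=max_turns)
        # Long-term memory: exercises practiced in each finished session.
        self.long_term: Dict[str, List[str]] = {}

    def add_turn(self, text: str) -> None:
        """Record one message; the oldest turn drops out when the buffer is full."""
        self.short_term.append(text)

    def end_session(self, session_id: str, exercises: Iterable[str]) -> None:
        """Store what was practiced, then clear the short-term buffer."""
        self.long_term[session_id] = list(exercises)
        self.short_term.clear()

    def practiced_before(self, exercise: str) -> bool:
        """True if the exercise appears in any earlier session."""
        return any(exercise in done for done in self.long_term.values())


memory = ConversationMemory(max_turns=2)
memory.add_turn("I felt tense before the meeting")
memory.end_session("session-1", ["breathing"])
print(memory.practiced_before("breathing"))
```

With a record like this, the follow-up on breathing exercises becomes a simple lookup at the start of the next session.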

Ginger, a mental health platform, uses predictive analytics to identify users who may be at risk. By analyzing patterns like increased stress or disrupted sleep over time, the system proactively reaches out to offer support [2]. Similarly, Mindstrong Health takes context tracking a step further by analyzing smartphone keyboard interactions - like typing speed and error rates - to detect changes in cognitive function and mental health [2].

However, balancing detailed context tracking with user privacy is critical. Chatbots must store enough information to provide personalized care while ensuring that sensitive health data remains secure through strong privacy measures.

How NLP Personalizes CBT Chatbot Interactions

Natural Language Processing (NLP) empowers CBT chatbots to create tailored interactions for every user. Instead of relying on generic responses, these systems analyze language patterns, preferences, and emotional cues to deliver a more individualized therapeutic experience. Using techniques like intent recognition and sentiment analysis, chatbots can now offer personalized CBT interventions that feel both relevant and supportive.

Customizing CBT Exercises

NLP allows chatbots to fine-tune CBT exercises based on how users express their thoughts. For example, if someone says, "I always mess things up", the chatbot identifies this as overgeneralization - a common cognitive distortion in which one setback is treated as a permanent pattern - and suggests thought-challenging exercises to address it.
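One simple way to picture this mapping from wording to exercise is a small rule table. The distortion labels follow common CBT terminology, but the cue patterns, exercise descriptions, and `suggest_exercises` function are assumptions for this sketch, not any product's actual rules.

```python
import re
from typing import List, Tuple

# (label, cue pattern, suggested exercise) - hypothetical rules for illustration.
DISTORTION_RULES: List[Tuple[str, str, str]] = [
    ("overgeneralization",
     r"\b(always|never|every time|everyone|nobody)\b",
     "thought record: list counterexamples"),
    ("catastrophizing",
     r"\b(disaster|ruined|worst|unbearable)\b",
     "decatastrophizing: best, worst, and most likely outcome"),
    ("should_statements",
     r"\b(should|must|ought to)\b",
     "reword the rule as a preference"),
]


def suggest_exercises(message: str) -> List[Tuple[str, str]]:
    """Return (distortion label, exercise) pairs whose cue pattern matches."""
    text = message.lower()
    return [
        (label, exercise)
        for label, pattern, exercise in DISTORTION_RULES
        if re.search(pattern, text)
    ]


print(suggest_exercises("I always mess things up"))
```

Word-level cues over-trigger on harmless sentences ("I always walk to work"), so a rule table like this would at most feed a classifier or prompt a clarifying question.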

Chatbots also adapt the style and complexity of interventions to match the user's communication style. A person who uses analytical and technical language might receive structured, worksheet-based exercises, while someone who leans toward emotional storytelling could be guided through visualization techniques or narrative exercises.

Take journaling prompts as another example. Instead of asking broad questions like, "How are you feeling today?", the chatbot uses context from previous sessions to craft specific prompts. If work stress was discussed earlier, the chatbot might ask, "What thoughts went through your mind during that challenging meeting?" This level of specificity makes the exercises more engaging and relevant.

Timing plays a key role too. By analyzing language patterns, NLP can detect when a user is ready to tackle new techniques or when they might be resistant. This helps the chatbot introduce exercises at the right moment, increasing the chances that users will adopt these skills effectively.

Dynamic Response Adjustments

NLP also enables chatbots to adjust their communication style in real time, ensuring responses align with the user's emotional state and progress. For instance, if sentiment analysis detects heightened anxiety or distress, the chatbot shifts to a more supportive tone. The responses might become shorter and more focused on immediate coping strategies to avoid overwhelming the user. On the other hand, when users display confidence and stability, the chatbot can introduce more challenging exercises or dive into deeper therapeutic concepts.
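The tone shift described here amounts to mapping an estimated distress level onto a response style. In the sketch below, the `ResponseStyle` fields, the `choose_style` function, and the 0.3 and 0.7 thresholds are all illustrative assumptions; the distress score is presumed to come from an upstream sentiment model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponseStyle:
    tone: str
    max_sentences: int
    focus: str


def choose_style(distress: float) -> ResponseStyle:
    """Map a distress score in [0, 1] to a response style.

    The thresholds are placeholders, not clinically validated cut-offs.
    """
    if not 0.0 <= distress <= 1.0:
        raise ValueError("distress must be between 0 and 1")
    if distress >= 0.7:
        # High distress: short, supportive, focused on immediate coping.
        return ResponseStyle("supportive", 2, "immediate coping strategies")
    if distress >= 0.3:
        return ResponseStyle("encouraging", 4, "guided CBT exercise")
    # Low distress: room for longer, more challenging material.
    return ResponseStyle("collaborative", 6, "deeper therapeutic concepts")


print(choose_style(0.85))
```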

The chatbot also learns from user behavior. If someone tends to respond with brief, hesitant messages, the chatbot simplifies its questions and offers more encouragement. For users who write in detailed, thoughtful responses, the chatbot can engage in more complex therapeutic discussions.

Personalization extends to communication styles and cultural nuances as well. NLP systems can identify whether a user prefers formal or casual language and adjust accordingly. They also recognize culturally specific expressions of distress or coping, tailoring the therapeutic approach to feel more natural and relatable.

Progress tracking is another area where NLP shines. As users begin to use more balanced language or show cognitive flexibility, the chatbot acknowledges these improvements, reinforcing positive change. For example, it might say, "You've been framing your thoughts in a more constructive way lately - great progress!"
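As a hedged sketch of how "more balanced language" might be measured, the code below compares the rate of absolutist words in earlier and recent messages. The word list, the `min_drop` threshold, and both function names are assumptions for illustration; a drop in this rate is at best a weak proxy for cognitive flexibility.

```python
import re
from typing import List

# Illustrative list of absolutist words (an assumption, not a validated scale).
ABSOLUTIST = {"always", "never", "nothing", "everything", "completely", "totally"}


def absolutist_rate(message: str) -> float:
    """Fraction of words in the message that are absolutist terms."""
    tokens = re.findall(r"[a-z']+", message.lower())
    if not tokens:
        return 0.0
    return sum(t in ABSOLUTIST for t in tokens) / len(tokens)


def average_rate(messages: List[str]) -> float:
    """Mean absolutist rate across a list of messages."""
    return sum(absolutist_rate(m) for m in messages) / len(messages)


def shows_progress(earlier: List[str], recent: List[str],
                   min_drop: float = 0.05) -> bool:
    """True if the average absolutist rate fell by at least min_drop."""
    if not earlier or not recent:
        return False
    return average_rate(earlier) - average_rate(recent) >= min_drop


earlier = ["I always fail", "Nothing ever works"]
recent = ["Today was hard but I managed", "Some things went well"]
print(shows_progress(earlier, recent))
```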

Crisis Monitoring and Escalation

NLP plays a critical role in monitoring for signs of crisis, analyzing language for subtle shifts that might indicate a decline in mental health. Beyond detecting explicit phrases like "I feel hopeless", NLP can pick up on more nuanced changes. For example, if a user who typically writes in complete sentences starts using fragmented or disjointed language, the chatbot may prompt additional assessment questions. Similarly, changes in response length, emotional tone, or engagement frequency can signal a need for closer monitoring.

The chatbot tailors its crisis response based on user preferences and past interactions. Some users respond well to direct safety questions, while others may withdraw if approached too bluntly. By learning these patterns, the chatbot adjusts its approach, improving the likelihood of getting an honest answer about the user's state of mind.

In cases of escalating distress, chatbots can implement graduated interventions. Rather than immediately escalating to a human counselor, the chatbot might first try personalized de-escalation techniques. These could include breathing exercises, specific coping statements, or references to personal support systems the user has mentioned in the past.
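A graduated intervention can be modeled as a ladder that the chatbot climbs only when the current step is not helping. The step names, the `next_step` function, and the `explicit_risk` override are assumptions of this sketch; the override reflects the view that explicit risk statements should bypass the ladder entirely.

```python
from enum import IntEnum


class Step(IntEnum):
    BREATHING = 0
    COPING_STATEMENT = 1
    SUPPORT_CONTACT = 2
    HUMAN_COUNSELOR = 3


def next_step(current: Step, distress_before: float, distress_after: float,
              explicit_risk: bool = False) -> Step:
    """Pick the next intervention step from the change in distress."""
    # Explicit risk language skips the ladder and goes straight to a human.
    if explicit_risk:
        return Step.HUMAN_COUNSELOR
    # Distress dropped: the current technique is helping, so stay with it.
    if distress_after < distress_before:
        return current
    # Otherwise move up one rung, capped at human escalation.
    return Step(min(current + 1, Step.HUMAN_COUNSELOR))


print(next_step(Step.BREATHING, distress_before=0.6, distress_after=0.7).name)
```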

Over time, NLP systems also learn to distinguish between typical user expressions and genuine crises. For instance, some individuals naturally use dramatic language that might initially trigger crisis protocols. By understanding the user's baseline communication style, the chatbot can reduce false alarms and focus on genuine concerns.
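Comparing a user against their own baseline can be sketched as a z-score check on any per-message feature, such as word count or an intensity score. The `deviates_from_baseline` function, the threshold of 2 standard deviations, and the minimum history length are illustrative assumptions.

```python
from statistics import mean, stdev
from typing import Sequence


def deviates_from_baseline(history: Sequence[float], current: float,
                           z_threshold: float = 2.0,
                           min_history: int = 5) -> bool:
    """True when `current` is unusually far from this user's own history."""
    # Too little history to define a baseline: do not flag.
    if len(history) < min_history:
        return False
    spread = stdev(history)
    if spread == 0:
        # Perfectly constant history: any change counts as a deviation.
        return current != history[0]
    return abs(current - mean(history)) / spread > z_threshold


# Words per message in recent sessions, then a sudden four-word message.
word_counts = [20, 22, 19, 21, 23]
print(deviates_from_baseline(word_counts, 4))
```

Because the comparison is relative, a user who habitually writes in dramatic terms has a high baseline and is flagged only when their language shifts beyond that norm.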

When human intervention is required, the chatbot provides valuable context to counselors or mental health professionals. This includes patterns of communication, effective past interventions, and the user’s typical emotional expressions, enabling a more informed and effective response. These personalized crisis strategies highlight how NLP enhances not just risk detection but also the overall quality of therapeutic support provided by CBT chatbots.

Benefits and Drawbacks of NLP-Powered CBT Chatbots

NLP-powered CBT chatbots bring mental health support to more people, but their advantages come with real limitations. Knowing these trade-offs helps users, therapists, and developers decide how to use these tools wisely.

One of the biggest advantages is accessibility. These chatbots are available 24/7, providing immediate help whenever it's needed. For example, someone dealing with anxiety at 2 AM can access coping techniques instantly without waiting for their next therapy session. This constant availability is especially valuable for people living in rural areas with limited access to mental health professionals or for those who can’t afford traditional therapy.

Chatbots are also scalable and affordable, capable of supporting thousands of users at once. With the rising demand for mental health services and a shortage of therapists worldwide, this scalability fills a critical gap. Additionally, the anonymity provided by chatbots can make it easier for users to share personal details, especially for stigmatized mental health issues.

However, these chatbots come with limitations. One major drawback is their inability to handle complex emotions or situations that require nuanced human judgment. For instance, someone expressing suicidal thoughts might receive a standard crisis response instead of the personalized care and immediate intervention they need.

Another challenge is the lack of genuine empathy. While chatbots can mimic empathetic language, they don’t truly understand human emotions or provide the deep connection that many people seek during difficult times. This becomes especially problematic in crisis situations or when addressing deep-seated trauma.

Comparison of Benefits and Drawbacks

| Benefits | Drawbacks |
|---|---|
| 24/7 Availability - Always accessible for immediate support | Limited Emotional Understanding - Can't truly grasp complex emotions or offer genuine empathy |
| Cost-Effective - More affordable than traditional therapy | Risk of Misinterpretation - May respond inappropriately to complex or nuanced issues |
| Scalability - Can assist thousands of users at once | Lack of Human Judgment - Unable to make clinical decisions or adapt to unique situations |
| Privacy and Anonymity - Encourages openness without fear of judgment | Over-Reliance Risk - May lead users to avoid seeking professional human help |
| Consistent Quality - Delivers standardized, evidence-based CBT techniques | Limited Crisis Management - Not equipped to handle severe mental health crises |
| Personalization - Adjusts responses based on user behavior and preferences | Data Privacy Concerns - Raises questions about the security of sensitive digital records |
| Reduced Stigma - Removes barriers of judgment that deter people from seeking help | Lack of Physical Presence - Can't provide comfort through non-verbal cues or physical presence |

While the table highlights the key pros and cons, there are deeper nuances worth exploring. For example, one major strength of chatbots is their consistency. Unlike human therapists, who may vary in approach or skill level, a well-designed chatbot provides the same evidence-based CBT techniques to every user. This ensures that individuals, regardless of their location or income, receive reliable interventions.

However, this very consistency can also be a drawback. Human therapists excel at detecting subtle cues and adapting their approach when needed, something chatbots do far less reliably. Despite advancements in NLP, chatbots are limited by their programming and training data and may miss critical underlying issues.

Data security is another area of concern. While chatbots offer privacy from human judgment, they create digital records of sensitive mental health information. Users must trust that their data is secure, but security practices vary across platforms, and breaches could expose extremely personal details.

NLP-powered CBT chatbots are most effective when used as complementary tools. They’re ideal for individuals with mild to moderate symptoms or as a stopgap between therapy sessions. However, for those dealing with severe mental health challenges, complex trauma, or active suicidal thoughts, professional human intervention is still essential. Recognizing these strengths and weaknesses is key to refining these tools and integrating them more effectively into mental health care.

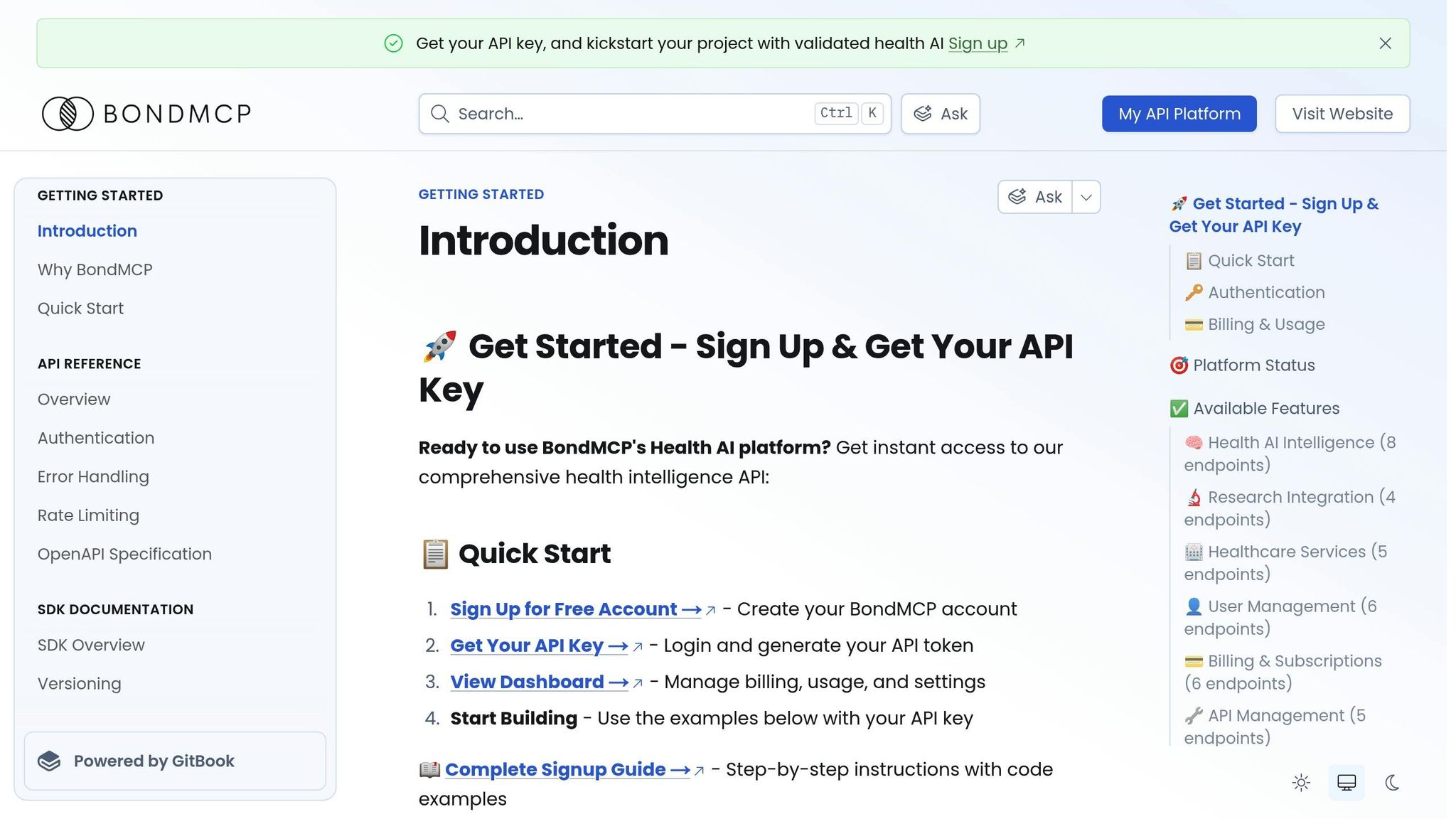

Improving NLP-Driven CBT Chatbots with BondMCP

Traditional CBT chatbots often work in isolation, which limits their ability to consider a user's overall health. BondMCP's Health Model Context Protocol changes this by connecting mental health tools to a broader ecosystem of health data. This integration allows for more personalized care and enhances NLP's ability to deliver nuanced and effective CBT responses. By unifying behavioral and biometric data, BondMCP creates opportunities for interventions tailored to the individual.

Integrating Mental Health Data with BondMCP

BondMCP's shared context layer connects data from sleep trackers, fitness apps, lab results, and supplement plans, giving CBT chatbots a comprehensive view of a user's health. This integration enables chatbots to provide more informed and personalized interventions. For instance, if a user reports feeling overwhelmed, the chatbot can access relevant health data to craft a response that addresses both mental and physical factors.

The protocol uses a health-specific ontology to ensure interoperability between mental health tools and other wellness systems. This interconnected approach allows chatbots to go beyond surface-level interactions and offer responses grounded in a user's broader health profile.

Supporting Precision Mental Health Interventions

With BondMCP, chatbots can deliver more proactive and precise mental health care. Instead of relying solely on what users share during conversations, the system incorporates trends and changes from their overall health data. This enables interventions that adapt to a user's evolving needs.

BondMCP also coordinates between mental health tools and other health optimization systems. For example, if a user's sleep quality or activity levels decline, the chatbot can suggest stress management techniques while other tools address related issues. This collaborative approach eliminates the fragmented care often caused by disconnected health tools.
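To illustrate the kind of rule this enables, the sketch below flags a recent drop in sleep and pairs it with a reported mood. It does not use BondMCP's SDK, whose interface is not described in this article: the plain list of nightly sleep hours stands in for whatever the shared context layer would supply, and both functions and their thresholds are hypothetical.

```python
from statistics import mean
from typing import Optional, Sequence


def sleep_declined(nightly_hours: Sequence[float], window: int = 3,
                   min_drop: float = 1.0) -> bool:
    """True if the latest window averages at least min_drop hours less
    than the window before it."""
    if len(nightly_hours) < 2 * window:
        return False  # not enough data to compare two windows
    previous = mean(nightly_hours[-2 * window:-window])
    latest = mean(nightly_hours[-window:])
    return previous - latest >= min_drop


def stress_prompt(nightly_hours: Sequence[float],
                  reported_mood: str) -> Optional[str]:
    """Suggest a stress-management prompt when mood and sleep both point to strain."""
    if reported_mood == "overwhelmed" and sleep_declined(nightly_hours):
        return ("You've been sleeping less lately. "
                "Would a short wind-down exercise help tonight?")
    return None


print(stress_prompt([7.5, 7.0, 7.5, 6.0, 5.5, 6.0], "overwhelmed"))
```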

Additionally, BondMCP's context-aware agents refine CBT interventions by aligning responses with real-time health metrics. This ensures that the chatbot provides timely, relevant, and personalized support.

Supporting Developers and Clinics

BondMCP doesn't just enhance chatbot functionality - it also simplifies development for creators and improves care delivery for clinics. Developers building CBT chatbots can use BondMCP's SDK to streamline tasks like memory management, data routing, and health data integration. This frees them to focus on improving therapeutic algorithms while leveraging a robust ecosystem of interoperable health tools.

The platform's plug-and-play design makes it easy to integrate with a wide range of health apps and devices through a single connection. This reduces development time and complexity while ensuring that mental health tools remain compatible with evolving health systems. For clinics, this streamlined integration improves care coordination and patient monitoring.

Clinics and healthcare providers also benefit from BondMCP's ability to unify previously siloed patient data. Therapists gain access to detailed dashboards that show how mental health interventions interact with overall health, enabling more informed decisions. Automated care coordination between AI tools and clinical systems ensures that cases requiring human attention are flagged promptly, enhancing the quality of care and reducing gaps in treatment.

The Future of NLP and Mental Health Chatbots

The development of NLP-powered CBT chatbots represents a major leap from basic, rule-based systems to more advanced, context-aware therapeutic tools. By combining intent recognition, sentiment analysis, and conversation tracking, these chatbots are now capable of offering meaningful support that aligns with the principles of cognitive behavioral therapy. This progress is paving the way for integrating richer health data into chatbot interactions, enhancing their effectiveness.

With platforms like BondMCP, future CBT chatbots could incorporate a wide range of health data, such as sleep patterns, activity levels, and lab results, to build a more complete picture of the user. This allows for interventions that address both mental and physical aspects of well-being, creating a more tailored approach to care.

Precision mental health is becoming a reality as NLP evolves to link mood patterns with biometric data and lifestyle changes. For example, a chatbot could identify early signs of depression not only through analyzing conversations but also by recognizing patterns in health metrics. This kind of proactive monitoring could help prevent mental health crises, shifting the focus from treating problems after they arise to addressing them before they escalate.

Another exciting development is the push toward system interoperability. Tools like BondMCP aim to eliminate the fragmentation often seen in healthcare systems, enabling seamless collaboration between different technologies. This benefits clinicians by providing richer patient data and frees developers to concentrate on improving therapeutic algorithms, rather than building infrastructure.

As these technologies advance, they also help expand access to mental health care. NLP-driven chatbots, with their ability to provide personalized, round-the-clock support, can be a lifeline for individuals who might not have access to traditional therapy. Their integration with existing healthcare systems ensures they can deliver effective care while remaining accessible to a broader audience.

Looking ahead, the future of mental health care is likely to involve a hybrid model that combines the strengths of human expertise with AI-driven, personalized support. This blend of digital and traditional therapy has the potential to transform how care is delivered, making it more responsive and inclusive.

FAQs

How do NLP-powered CBT chatbots protect user privacy while analyzing emotional cues and conversation context?

Well-designed NLP-powered CBT chatbots protect user privacy with strong encryption and data anonymization, so that both conversation details and emotional insights stay confidential.

They also follow rigorous data storage practices, seek clear user consent before accessing or processing personal details, and comply with applicable privacy regulations. Privacy-preserving AI techniques allow them to analyze emotional cues without exposing identifiable information. Security practices still vary across platforms, so these safeguards describe well-built systems rather than every chatbot.
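Two of these safeguards can be sketched with the Python standard library: redacting obvious identifiers before text is stored, and replacing user IDs with keyed hashes. The regular expressions are deliberately simple and will miss many formats, and `redact` and `pseudonymize` are hypothetical helper names. Keyed hashing is pseudonymization, not full anonymization, since anyone holding the key can re-link records.

```python
import hashlib
import hmac
import re

# Simplified patterns for illustration; real PII detection needs far more coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Return a stable keyed hash (HMAC-SHA256) to use in place of the raw ID."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


print(redact("Reach me at jo@example.com or 555-123-4567"))
```

Emotional analysis can then run on the redacted text keyed by the pseudonym, so stored records carry neither the raw identifier nor obvious contact details.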

How does BondMCP improve the personalization and effectiveness of CBT chatbots for mental health support?

BondMCP works as a context-aware integration layer, seamlessly combining health data, daily routines, and therapeutic interventions. This allows CBT chatbots to provide real-time, personalized support that aligns with each person’s specific health profile.

With BondMCP, chatbots can fine-tune their responses to better match users' needs, offering more targeted cognitive behavioral therapy. This comprehensive approach is intended to boost user engagement and make interventions more meaningful for each user's mental health.

Can chatbots using NLP completely replace therapists, or do some situations still require human involvement?

While chatbots powered by NLP are great for offering accessible and tailored mental health support, they fall short when it comes to replacing human therapists. These tools struggle to grasp complex emotions, show genuine empathy, or handle severe mental health issues effectively.

For critical conditions, deep emotional needs, or situations that demand a high degree of sensitivity and nuanced understanding, human involvement is irreplaceable. Chatbots shine when used alongside therapy, helping with routine mental health upkeep and self-guided exercises. However, they should never be seen as a stand-in for professional care in more serious circumstances.