Your health data is valuable, but who really owns it when AI systems use it? Here’s a quick breakdown:

- Ownership is unclear: Patients, healthcare providers, tech companies, and researchers all claim stakes in health data.

- AI complicates things: AI generates "derived data" from your health records, raising questions about consent and control.

- Privacy concerns: AI can re-identify individuals from anonymized data, exposing gaps in traditional safeguards.

- Legal gaps exist: Laws like HIPAA don’t fully cover AI companies, leaving patients vulnerable.

- Fragmented data is a problem: Scattered health records across systems make it hard to deliver personalized care.

Key Solutions:

- Shared ownership models: Collaboration between patients, providers, and developers.

- Blockchain and data trusts: Secure, transparent systems for managing health data.

- Patient-controlled models: Tools like digital health passports to give patients full control.

Ownership matters because your health data powers AI, and protecting it ensures better care and trust. Read on to explore how this impacts you and the future of healthcare.


Managing the many streams of health data effectively often requires real-time health insights, so that patients can maintain oversight of their information.

Current State of Health Data Ownership

The current state of health data ownership is a tangled web, with various stakeholders controlling different pieces of the same patient’s information. This fragmented landscape creates significant challenges, especially as AI systems become more integrated into healthcare. To understand the complexity, it’s important to examine who holds ownership and the legal frameworks governing health data today.

Who Claims Ownership of Health Data

Ownership of health data is shared among several key players, each with distinct legal claims. For starters, healthcare providers, such as doctors’ offices and hospitals, typically own the physical records of patient information. This means that while the data pertains to the patient, the actual records - like medical histories, test results, and treatment notes - are legally owned by the institutions that create and store them [3].

Government agencies also maintain control over patient data stored in their databases, adding another layer of oversight [3]. Meanwhile, tech companies and AI platforms often assert rights over the processed data and the insights generated by their algorithms. For instance, when AI systems analyze health data to make predictions or recommendations, it is unclear whether the resulting insights belong to the patient or to the platform that created them [2]. Researchers, too, access anonymized datasets for studies, but the line between research and commercial use can blur, especially when pharmaceutical or tech companies sponsor such studies.

Marielle Gross, a bioethicist at Johns Hopkins, captures the issue perfectly:

"We're taking bits - whether you want to interpret that digitally, physically - of people and we are creating products and selling it back to them." [3]

This fragmented ownership model makes it difficult for AI systems to compile the cohesive, comprehensive data needed for personalized healthcare.

Laws and Regulations That Apply Today

The legal framework governing health data in the U.S. was largely established before AI became a central player in healthcare, leaving significant gaps. The Health Insurance Portability and Accountability Act (HIPAA) is the cornerstone of federal regulations, focusing on protecting patient information held by traditional healthcare entities like hospitals, doctors, and insurers. HIPAA’s Privacy Rule ensures that individually identifiable health information is safeguarded.

However, HIPAA applies only to covered entities and their business associates, so many AI companies and consumer health apps fall outside its reach. To fill this gap, the Federal Trade Commission (FTC) has taken action, using Section 5(a) of the FTC Act and the Health Breach Notification Rule. For example, in 2021, the FTC reached a settlement with Flo Health Inc. over allegations that it shared users’ personal health data with third parties without proper consent.

The Food and Drug Administration (FDA) also plays a role, particularly in regulating digital health devices and AI-powered software. Yet, the FDA acknowledges that its traditional regulatory model wasn’t designed for adaptive AI technologies. As the agency explains:

"The traditional paradigm of medical device regulation was not designed for adaptive AI/ML technologies, which have the potential to adapt and optimise device performance in real-time to continuously improve healthcare for patients" [2.5].

State laws, such as the California Consumer Privacy Act (CCPA), sometimes impose stricter rules than federal regulations. However, the rapid growth of the digital health market - expected to reach between $54 billion and $95 billion by 2025 [1.3] - has far outpaced these regulatory frameworks. This lag leaves critical gaps in AI-specific governance, complicating efforts to integrate health data effectively.

Problems with Scattered Health Data

The fragmentation of health data creates numerous challenges for patients, providers, and AI systems. One of the most pressing issues is the lack of interoperability, which costs the U.S. healthcare system over $30 billion annually [4]. Patient data is scattered across hospitals, clinics, labs, and even wearable devices, often stored in ways that make it difficult to consolidate.

Current interoperability standards tend to prioritize data exchange between institutions rather than empowering patients to access their complete medical histories. This can pose serious risks, especially in emergencies where timely access to comprehensive data could save lives [4].

Organizational silos exacerbate these problems. Only 18% of healthcare organizations report having a dedicated data governance team, while 63% of health system executives believe that clearly defining data ownership would improve care coordination [6]. Without proper governance, "turf wars" between organizations can obstruct data sharing and collaboration [7].

The financial toll is also substantial. Duplicate records add about $1,950 per inpatient stay and over $800 per emergency department visit [6]. Privacy breaches compound the problem: nearly 50 million Americans were affected by health data breaches in 2022. That exposure underscores the need for secure agent memory protocols to protect sensitive information, and it makes both organizations and patients more cautious about sharing data, even when sharing could improve care [6].

Richie Etwaru, founder and CEO of Hu-manity.co, highlights the core challenge:

"Consumers want agency over their data, and institutions want access to data. Consumers expect ethics and reciprocity, and institutions struggle to keep up with emerging regulations. Ownership is the platform of clarity needed to further discussions around data agency, data ethics, and data reciprocity." [5]

For AI systems, which rely on unified and high-quality data, this fragmentation is a major roadblock. Without cohesive data, delivering accurate, personalized healthcare insights becomes far more difficult.

Key Problems in Health Data Ownership

The fragmented nature of health data ownership creates a host of challenges, especially as artificial intelligence (AI) becomes more integrated into healthcare. These issues go beyond technical hurdles, touching on consent, intellectual property, and the maze of global regulations - affecting patients, healthcare providers, and tech companies alike.

Unclear Patient Consent Issues

One of the most pressing concerns in modern healthcare is ensuring patients provide clear, informed consent for the use of their health data in AI applications. The rapid pace of AI development has outstripped existing consent frameworks, leaving healthcare providers navigating uncertain waters.

Trust plays a significant role here. Surveys show that patients generally trust healthcare providers more than tech companies, reflecting ongoing fears about how data is handled and secured. This trust gap is compounded by the opaque nature of many AI systems. Providers themselves often lack sufficient training to explain how AI works, making it harder for patients to grasp what they’re agreeing to.

Media portrayals of AI further muddy the waters. Sometimes, AI is hyped as flawless; other times, it’s framed as a potential threat. These extremes can skew patient expectations and make meaningful consent discussions more challenging. Adding to the complexity, AI health apps and chatbots frequently update their user agreements, often without revisiting patient consent.

To address these issues, healthcare providers should take a proactive approach. They need to clearly explain how AI systems function, share their own experiences with the technology, discuss risks and benefits compared to traditional methods, outline human oversight roles, and emphasize the safeguards in place to protect data privacy. Without this transparency, consent becomes less about patient choice and more about navigating legal fine print.

These consent challenges inevitably lead to bigger questions about who owns the insights generated by AI.

Who Owns AI-Generated Health Insights?

When AI systems analyze patient data to produce insights, recommendations, or predictions, the question of ownership becomes murky. Patients, providers, and developers all have stakes in the data, making it difficult to determine legal rights. This ambiguity can discourage patients from engaging with AI-driven health programs.

The issue becomes even more complex when AI combines behavioral data from multiple patients in health agent workflows to create personalized insights. This raises thorny questions about intellectual property and the commercial value of patient data. As Paul, a patient advocate, aptly put it:

"Emotion is stronger than logic here" [9].

Different Rules Across Countries

AI development operates on a global scale, but privacy and security regulations vary widely across countries. Over 120 nations have their own rules, creating a compliance minefield for AI health platforms. The financial risks are significant, with penalties for non-compliance differing dramatically by region:

| Region | Regulation | Maximum Fine | Focus |
|---|---|---|---|
| European Union | GDPR | €20 million or 4% of global turnover | Strict consent and comprehensive protection |
| California, US | CCPA/CPRA | $7,500 per violation | Transparency and opt-out options |
| Canada | PIPEDA | $100,000 CAD per violation | Fairness and accountability |
| Brazil | LGPD | 2% of revenue, up to R$50 million | Broad personal data protections |
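To make the ceilings in the table concrete, here is a minimal Python sketch of the arithmetic. The function names are hypothetical, and the figures come from the table, with one clarification: under GDPR, the ceiling for the most serious infringements is whichever of the two amounts is higher. Actual penalties depend on the regulator and the circumstances, so this illustrates the formulas only and is not a compliance tool.

```python
def gdpr_fine_ceiling_eur(global_turnover_eur: float) -> float:
    """Higher of EUR 20 million or 4% of worldwide annual turnover."""
    return max(20_000_000, global_turnover_eur * 4 / 100)


def lgpd_fine_ceiling_brl(revenue_brl: float) -> float:
    """2% of revenue, capped at R$50 million (figures from the table)."""
    return min(revenue_brl * 2 / 100, 50_000_000)


def per_violation_ceiling(violations: int, fine_per_violation: float) -> float:
    """Per-violation regimes (e.g. CCPA/CPRA, PIPEDA) scale with the count."""
    return violations * fine_per_violation


# Illustrative inputs only (assumed company sizes, not real cases).
print(gdpr_fine_ceiling_eur(1_000_000_000))   # 4% exceeds the EUR 20M floor
print(gdpr_fine_ceiling_eur(100_000_000))     # EUR 20M floor applies
print(lgpd_fine_ceiling_brl(10_000_000_000))  # cap of R$50M applies
print(per_violation_ceiling(1_000, 7_500))    # CCPA/CPRA figure from the table
```

The contrast is worth noting: the GDPR ceiling grows with company size, while the LGPD cap and the per-violation regimes bound exposure differently, which is part of why a single global compliance policy is hard to design.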

Beyond financial penalties, cross-border data sharing adds another layer of complexity. Different countries have varying laws for handling personal health information. For example, the European Union's GDPR provides stringent protections, while the United States relies on frameworks like HIPAA. AI systems, which require diverse datasets to reduce bias, must navigate these legal differences when incorporating data from multiple regions.

For AI companies, this patchwork of regulations means carefully mapping data flows, tailoring policies to regional requirements, and implementing robust data minimization practices. Comprehensive privacy training for staff is also essential. However, these measures can be prohibitively expensive for smaller developers, potentially slowing progress in the field.

Tackling these challenges is critical to creating ownership models that respect patient rights and support innovation in healthcare AI.


New Approaches to Health Data Ownership

The question of who owns health data in AI systems is a tricky one, and it has led to some creative solutions that go beyond the usual options of privatization or public control. Instead, newer approaches focus on collaboration, transparency, and giving patients more control. These strategies aim to balance the needs of patients, healthcare providers, and AI developers, creating a system that works for everyone.

Shared Ownership Models

Shared ownership models shift the focus from assigning data to a single entity to fostering collaborative responsibility. In these frameworks, patients, healthcare providers, and AI developers share rights and obligations, working together to clarify how data is used and accessed. This approach tackles concerns about vague consent and ensures all parties are on the same page.

One practical way to implement this is through governance committees that include representatives from all stakeholders. These committees oversee data use and ensure that benefits are distributed fairly. For these models to succeed, clear communication about how data flows, what insights are generated, and how benefits are shared is key. Alongside these collaborative efforts, technology plays a big role in securing and managing health data.

Blockchain and Data Trust Solutions

Blockchain technology and data trust frameworks provide technical tools to improve both the security and transparency of health data ownership. Estonia’s adoption of blockchain, led by Guardtime in 2008, stands out as an early example of how blockchain can give citizens secure, unchangeable access to their health records[11]. Over time, blockchain systems have evolved to tackle challenges like fragmented data, limited interoperability, and security risks[12].
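The "unchangeable" property mentioned above comes from chaining records together with cryptographic hashes. The following is a minimal Python sketch of that one idea, a hash-chained access log. It is not a full blockchain (there is no distribution or consensus between nodes), it is not how Guardtime's or Estonia's systems are implemented, and all function and field names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the payload together with the previous entry's hash."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain: list, payload: dict) -> None:
    """Append a new log entry linked to the current tail of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append({
        "prev": prev_hash,
        "payload": payload,
        "hash": entry_hash(prev_hash, payload),
    })


def verify_chain(chain: list) -> bool:
    """Return True only if every link and every stored hash is intact."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["prev"], entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"actor": "clinic-a", "action": "read", "record": "lab-123"})
append_entry(log, {"actor": "patient", "action": "share", "record": "lab-123"})
print(verify_chain(log))  # the untouched log verifies

log[0]["payload"]["actor"] = "someone-else"  # simulate tampering
print(verify_chain(log))  # the edit no longer matches the stored hash
```

Because each entry's hash covers the previous hash, changing an old entry invalidates it and everything after it, which is what makes after-the-fact edits detectable.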

Data trust frameworks go hand in hand with blockchain by focusing on data quality and reliability. As Talend describes it:

"Data trust means having confidence that your organization's data is healthy and ready to act on."[10]

Institutions like Beneva have shown how these frameworks can work in practice. By unifying their data management systems, Beneva tripled customer win-back conversions, demonstrating the value of clean, well-organized data[10]. These technical advancements pave the way for even more patient-centered solutions.

Patient-Controlled Ownership Models

Patient-controlled ownership models take things a step further by making patients the main decision-makers when it comes to their health data. These systems replace the "pull" model - where patients must request access to their records - with a "push" model that automatically provides data in easy-to-use formats. This not only addresses privacy concerns but also creates opportunities for patients to actively participate in health data markets[13].

One example is digital health passports, which allow patients to securely share their comprehensive health records. Another is the HIE of One model, which lets patients retain ownership of their data while still supporting clinical needs. This approach enables clinicians to manage teaching files and AI systems without taking away patient control[14].

For these models to work, interoperability is crucial. Health systems need to support smooth data sharing across different providers and platforms while ensuring patients can control who accesses their information. Educating patients about how consent works and how to use tools like digital health passports is equally important. When patients own their data, they can even choose to participate in research studies or AI projects on a compensated basis, rather than simply being unpaid data sources[13]. This approach not only empowers patients but also encourages them to take a more active role in their healthcare.
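As a rough illustration of what "patients control who accesses their information" could mean in software, here is a minimal Python sketch of a patient-held consent registry. It is an assumption-laden toy, not the design of any digital health passport or of HIE of One: the class, method, and category names are all hypothetical. The key design choice is default deny, so access exists only while an explicit, unexpired grant does.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional, Tuple


@dataclass
class ConsentRegistry:
    """Hypothetical patient-held record of who may read which data category."""

    # (recipient, category) -> expiry time, or None for "until revoked"
    grants: Dict[Tuple[str, str], Optional[datetime]] = field(default_factory=dict)

    def grant(self, recipient: str, category: str,
              expires: Optional[datetime] = None) -> None:
        self.grants[(recipient, category)] = expires

    def revoke(self, recipient: str, category: str) -> None:
        self.grants.pop((recipient, category), None)

    def is_allowed(self, recipient: str, category: str,
                   now: Optional[datetime] = None) -> bool:
        key = (recipient, category)
        if key not in self.grants:
            return False  # default deny: no grant means no access
        expires = self.grants[key]
        now = now or datetime.now(timezone.utc)
        return expires is None or now < expires


registry = ConsentRegistry()
registry.grant("clinic-a", "lab_results")
registry.grant(
    "research-study-1", "sleep_data",
    expires=datetime.now(timezone.utc) + timedelta(days=30),
)
print(registry.is_allowed("clinic-a", "lab_results"))
registry.revoke("clinic-a", "lab_results")
print(registry.is_allowed("clinic-a", "lab_results"))
```

Time-limited grants like the research example are one way a patient could take part in a study for a fixed period without handing over open-ended access.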

Together, these new strategies highlight the importance of trust, transparency, and technical innovation in creating a health data ecosystem that benefits everyone involved in AI-driven healthcare.

How BondMCP Addresses Health Data Ownership

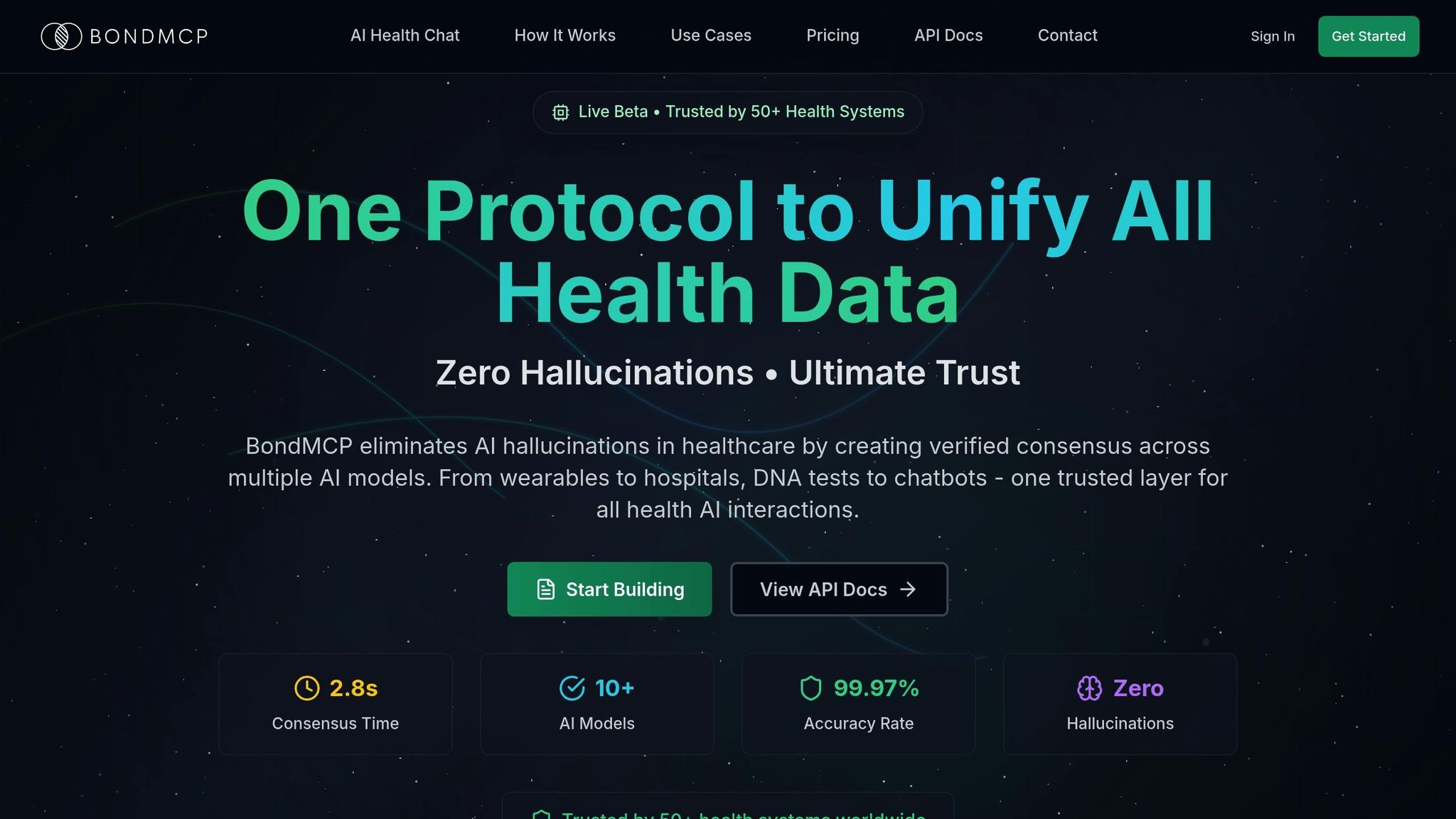

BondMCP tackles the challenge of fragmented health data and unclear ownership by bringing everything together in one patient-centered system. It integrates data from various sources, ensuring patients retain control while leveraging AI to provide meaningful insights.

How BondMCP Connects Different Health Data Sources

Health data often exists in silos - scattered across wearables, electronic health records (EHRs), lab results, and personal logs. BondMCP consolidates this scattered information into a unified framework, making it easier to manage and interpret[16]. By standardizing data and using a real-time consensus engine with 99.97% accuracy in under three seconds, it ensures reliable and verified health insights[15].

This seamless integration not only simplifies data management but also empowers patients by laying the groundwork for tailored healthcare solutions.
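The article does not describe how BondMCP's consensus engine works internally. Purely to illustrate the general idea of cross-checking several models, here is a minimal Python sketch of a majority-vote consensus with an agreement threshold. The function name, the threshold value, and the sample answers are all assumptions, and this should not be read as BondMCP's algorithm or API.

```python
from collections import Counter
from typing import List, Optional


def consensus(answers: List[str], min_agreement: float = 0.75) -> Optional[str]:
    """Return the most common normalized answer if enough sources agree.

    Returns None when there are no answers or agreement is below the
    threshold, signalling that the result should not be shown as verified.
    """
    if not answers:
        return None
    normalized = [a.strip().lower() for a in answers]
    top_answer, count = Counter(normalized).most_common(1)[0]
    if count / len(normalized) >= min_agreement:
        return top_answer
    return None


# Hypothetical outputs from four independent models for the same question.
print(consensus(["Low risk", "low risk", "low risk ", "High risk"]))
print(consensus(["low risk", "high risk"]))  # no sufficient agreement
```

Returning `None` instead of the plurality answer is the conservative choice here: a disagreement between models is surfaced for review instead of being hidden behind a single output.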

Giving Patients Control and Personalized Care

BondMCP puts patients in the driver’s seat, focusing on privacy and personalized healthcare[17]. Its AI agents synthesize data - like sleep habits, lab tests, and fitness routines - into actionable, customized health recommendations[16].

The platform’s commitment to security is evident in its compliance with HIPAA, SOC 2 certification, and GDPR readiness[15]. Every interaction is secured with cryptographic validation, offering trust certificates and audit trails for all medical insights.

"BondMCP eliminates AI hallucinations in healthcare by creating verified consensus across multiple AI models. From wearables to hospitals, DNA tests to chatbots - one trusted layer for all health AI interactions."

- BondMCP[15]

Tools for Clinics and Developers

Beyond empowering patients, BondMCP provides clinics and developers with tools to enhance healthcare delivery. Subscription plans range from $79 to $999 per month, with a free tier available for smaller operations[17]. Clinics benefit from the platform’s interoperability, which integrates seamlessly across devices and systems, ensuring providers gain insights while patients maintain control of their data. BondMCP is trusted by over 50 health systems worldwide[15], and its structured protocol and SDK allow developers to create context-aware, interoperable, and health-literate applications.

Through APIs that enable interoperability, developers can quickly integrate BondMCP into any health app, reducing technical complexities while staying compliant with privacy laws. Whether for solo practitioners or large enterprises, the platform scales effortlessly, setting consistent standards for health data ownership across the industry[17].

Conclusion: Balancing Progress with Data Rights

The future of healthcare AI hinges on finding the right balance between technological advancements and protecting patient rights. While AI thrives on access to health data to improve care, individuals must retain control over their personal information.

Patient data holds immense value - it's estimated to be 50 times more valuable than financial data[18]. However, trust in how this data is handled remains fragile. In fact, only 11% of American adults are comfortable sharing their health data with tech companies[1]. This hesitancy underscores the need for robust systems to safeguard privacy.

The urgency for clear frameworks is further emphasized by the alarming frequency of data breaches. In 2023 alone, the HHS Office for Civil Rights reported over 239 breaches, impacting the health data of more than 30 million Americans. These incidents highlight why establishing transparent ownership and security measures is critical for the future of healthcare AI.

Looking ahead, fostering transparency, empowering patients with control over their data, and ensuring fair benefits from digital health initiatives will be essential. This approach creates a positive feedback loop: better data leads to enhanced AI, which in turn improves healthcare outcomes[8].

As we've explored, achieving this balance requires addressing issues like data fragmentation, consent challenges, and emerging ownership models. A unified, patient-first approach is key to unlocking the full potential of AI in healthcare.

Key Points to Highlight

- Patient Agency and Consent: Healthcare systems must prioritize patient control by implementing strong security protocols, conducting AI impact assessments, and offering clear, user-friendly options to opt out of data sharing[1].

- Policy and Regulation: Policymakers must establish transparent laws that protect privacy while enabling responsible AI development. Clear ownership guidelines can promote innovation without compromising patient trust[20].

- Education and Clarity: Healthcare providers should provide straightforward terms and educational tools to help patients understand how their data contributes to predictive analytics and care improvements[19].

- Patient-Centered Platforms: Solutions like BondMCP demonstrate how unified platforms can consolidate fragmented data while maintaining strict privacy and ownership standards.

Ultimately, success depends on collaboration. Technology developers, policymakers, legal experts, and the public must work together to ensure data ownership is equitable and AI development is responsible. Only through this collective effort can we create a healthcare system that champions both progress and patient rights.

FAQs

Who owns your health data when AI is involved?

The question of who owns health data in AI systems is anything but straightforward. It involves a web of stakeholders, including patients, healthcare providers, and AI developers. While patients often believe they have ownership of their personal data, the reality becomes murky when AI enters the picture. Data may be processed, shared, or even repurposed in ways that aren't always clear or well-communicated.

AI also adds another layer of complexity to the issue of consent. Many patients might not fully grasp how their data will be used, particularly when it’s leveraged to train AI models. Even when data is anonymized, there’s still a risk of re-identification, which can compromise privacy and create new vulnerabilities.

To address these concerns, there’s an increasing push for transparent frameworks that protect patient rights, ensure informed consent, and promote ethical data practices. These steps are critical for building trust and maintaining accountability in AI-driven healthcare systems.
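The re-identification risk mentioned above can be made concrete with a k-anonymity check: if a combination of seemingly harmless fields (so-called quasi-identifiers) is shared by only one person in a dataset, that person may be identifiable even without a name. The Python sketch below uses invented sample records and hypothetical field names, and k-anonymity is only one of several privacy measures, so treat it as an illustration of the idea.

```python
from collections import Counter
from typing import Dict, List, Sequence


def group_sizes(records: List[Dict], quasi_identifiers: Sequence[str]) -> Counter:
    """Count how many records share each quasi-identifier combination."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def k_anonymity(records: List[Dict], quasi_identifiers: Sequence[str]) -> int:
    """Size of the smallest group; the dataset is k-anonymous for this k."""
    sizes = group_sizes(records, quasi_identifiers)
    return min(sizes.values()) if sizes else 0


def unique_records(records: List[Dict], quasi_identifiers: Sequence[str]) -> List[Dict]:
    """Records whose quasi-identifier combination appears exactly once."""
    sizes = group_sizes(records, quasi_identifiers)
    return [r for r in records
            if sizes[tuple(r[q] for q in quasi_identifiers)] == 1]


# Invented "anonymized" records: no names, but still potentially identifying.
records = [
    {"zip3": "021", "birth_year": 1980, "sex": "F", "diagnosis": "asthma"},
    {"zip3": "021", "birth_year": 1980, "sex": "F", "diagnosis": "diabetes"},
    {"zip3": "945", "birth_year": 1975, "sex": "M", "diagnosis": "hypertension"},
]
fields = ["zip3", "birth_year", "sex"]
print(k_anonymity(records, fields))          # smallest group size
print(len(unique_records(records, fields)))  # records that stand alone
```

A result of 1 means at least one person is unique on those fields, so anyone who knows that person's ZIP prefix, birth year, and sex could link them to a diagnosis.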

How can we address the challenges of fragmented health data ownership?

Addressing the issue of fragmented health data ownership calls for solutions that emphasize openness, teamwork, and putting patients in control. One way to tackle this challenge is by implementing shared ownership models. These frameworks bring patients, healthcare providers, and developers together to manage data in a way that promotes accountability, ethical use, and easily understood access rights.

Another approach involves leveraging secure technologies like blockchain. These tools can protect sensitive health data while allowing controlled sharing among relevant stakeholders. On top of that, integrating health data systems and improving communication across healthcare providers can help minimize fragmentation. This ensures that everyone involved in a patient's care has access to accurate and complete information.

Equally important is focusing on patient consent and ethical data collection practices. Building trust in this way encourages individuals to share their data, benefiting their own health while also contributing to advancements in medical research and care.

How can patients make sure their health data is handled securely and ethically in AI systems?

Patients can take proactive steps to ensure their health data is handled securely and responsibly when used in AI systems. Start by asking direct questions: How will my data be used? Who will have access to it? What measures are in place to protect my privacy? Clear communication with your healthcare provider is essential.

Pay attention to whether organizations implement strong security protocols, like advanced encryption methods and strict access restrictions. It’s equally important to know your rights regarding data ownership and consent. Be sure you’re aware of how your information might be shared with third parties and any associated risks.

Advocating for systems that emphasize privacy, ethical practices, and compliance with regulations ensures you maintain control over your personal health information.